What Is a Data Lakehouse?

A data lakehouse is a modern data architecture that combines the benefits of data lakes and data warehouses into a unified platform.

A data lakehouse supports structured, semi-structured, and unstructured data, while enabling BI and machine learning on a single platform through features like ACID transactions, governance, and scalable compute.

Benefits of Data Lakehouses

Data lakehouses give analysts and AI engineers a single environment for working with both real-time and historical data.

Key benefits include:

- Single data repository: Store, manage, and access clean, integrated data for SQL, ML, BI, and analytics in one system, reducing operational overhead.

- Support for diverse data types: Handle structured, semi-structured, and unstructured data, including logs, images, videos, text, and IoT data.

- Open and standardized formats: Use open file formats like Parquet or ORC and table formats like Delta Lake, Iceberg, or Hudi for flexibility and interoperability.

- Separation of storage and compute: Store data cheaply in cloud object storage while querying it with various compatible engines, improving scalability and cost-efficiency.

- Streaming and batch support: Process real-time and batch data in the same architecture, enabling high-throughput ingestion and hybrid workflows.

- Concurrent transactions: Allow multiple users or pipelines to read and write the same tables concurrently with ACID guarantees, so readers see consistent snapshots and conflicting writes are detected instead of silently corrupting data.

- Strong governance: Centralized access control, auditing, and data sharing policies ensure secure and compliant data usage across teams.
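The concurrent-transaction guarantee usually rests on a transaction log with optimistic concurrency control. The sketch below is a minimal, hypothetical illustration, loosely modeled on the numbered JSON commit files that Delta Lake keeps. Each writer reads the latest version, then tries to create the next version file with create-only-if-absent semantics. If another writer claimed that version first, the commit fails and the writer re-reads the log and retries. The names `try_commit`, `commit_with_retry`, and `CommitConflict` are invented for this example, and a local temporary directory stands in for object storage.

```python
import json
import os
import tempfile


class CommitConflict(Exception):
    """Raised when another writer has already claimed the version we tried to commit."""


def latest_version(log_dir: str) -> int:
    """Return the highest committed version number, or -1 if the log is empty."""
    versions = [int(name.split(".")[0]) for name in os.listdir(log_dir) if name.endswith(".json")]
    return max(versions, default=-1)


def try_commit(log_dir: str, read_version: int, actions: list) -> int:
    """Write commit file read_version + 1, failing if another writer created it first."""
    new_version = read_version + 1
    path = os.path.join(log_dir, f"{new_version:020d}.json")
    try:
        # O_CREAT | O_EXCL: create the file only if it does not exist yet
        # (atomic on local POSIX filesystems; object stores need an equivalent).
        fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL)
    except FileExistsError:
        raise CommitConflict(f"version {new_version} was committed by another writer") from None
    with os.fdopen(fd, "w") as f:
        json.dump(actions, f)
    return new_version


def commit_with_retry(log_dir: str, make_actions, max_attempts: int = 3) -> int:
    """Re-read the log and retry whenever a concurrent writer wins the race."""
    for _ in range(max_attempts):
        read_version = latest_version(log_dir)
        try:
            return try_commit(log_dir, read_version, make_actions(read_version))
        except CommitConflict:
            continue
    raise CommitConflict(f"gave up after {max_attempts} conflicting attempts")


# Two writers both read the empty log (version -1) and race to commit version 0.
log_dir = tempfile.mkdtemp()
v_a = try_commit(log_dir, -1, [{"add": "part-a.parquet"}])  # writer A wins version 0
try:
    try_commit(log_dir, -1, [{"add": "part-b.parquet"}])  # writer B's stale snapshot loses
except CommitConflict as err:
    print("conflict:", err)
v_b = commit_with_retry(log_dir, lambda v: [{"add": "part-b.parquet"}])  # B re-reads and retries
print(v_a, v_b)  # 0 1
```

Production table formats typically go further: before retrying, they check whether the conflicting commit touched the same data, and on cloud object storage they rely on a put-if-absent primitive or an external coordinator rather than `O_EXCL`.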

How Data Lakehouses Work

A data lakehouse blends the storage efficiency of a data lake with the structure and performance of a data warehouse.

Key components include:

- Centralized data storage: Uses low-cost cloud object storage to ingest and store large volumes of raw, diverse data types without upfront schema requirements.

- Metadata and schema layer: Adds structured metadata and table definitions on top of raw files, enabling SQL queries and structured data analysis.

- Support for ACID transactions: Integrates warehouse-style consistency by enabling reliable, concurrent read/write operations with transactional guarantees.

- Built-in governance tools: Offers features like access control, lineage tracking, and audit logging to support secure, enterprise-grade data management.

- Unified data access: Allows analysts, engineers, and data scientists across the organization to query and collaborate on the same up-to-date datasets.
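To make the metadata and schema layer concrete, here is a minimal sketch under simplifying assumptions: a Python dict of JSON-lines strings stands in for raw files in object storage, `TABLE_SCHEMA` is a hypothetical table definition, and an in-memory `sqlite3` database plays the role of the SQL engine. The raw records land without any upfront schema (one even carries an extra field). The table definition is applied only when the data is read, and that is what makes SQL possible over the raw files.

```python
import json
import sqlite3

# Hypothetical raw JSON-lines files as they might land in object storage, with no schema.
RAW_FILES = {
    "events/2025-01-01.jsonl": (
        '{"user_id": 1, "event": "view", "price": "19.99"}\n'
        '{"user_id": 2, "event": "purchase", "price": "5.00", "referrer": "email"}\n'
    ),
    "events/2025-01-02.jsonl": '{"user_id": 1, "event": "purchase", "price": "19.99"}\n',
}

# Hypothetical table definition held in the metadata layer: column -> Python cast.
TABLE_SCHEMA = {"user_id": int, "event": str, "price": float}
SQL_TYPES = {int: "INTEGER", str: "TEXT", float: "REAL"}


def read_table(files: dict, schema: dict):
    """Project each raw record onto the declared columns, casting values and dropping extras."""
    for content in files.values():
        for line in content.splitlines():
            record = json.loads(line)
            yield tuple(cast(record[col]) for col, cast in schema.items())


def register_table(conn: sqlite3.Connection, name: str, files: dict, schema: dict) -> None:
    """Create a SQL table from the schema and load the projected rows into it."""
    columns = ", ".join(f"{col} {SQL_TYPES[typ]}" for col, typ in schema.items())
    conn.execute(f"CREATE TABLE {name} ({columns})")
    placeholders = ", ".join("?" for _ in schema)
    conn.executemany(f"INSERT INTO {name} VALUES ({placeholders})", read_table(files, schema))


conn = sqlite3.connect(":memory:")
register_table(conn, "events", RAW_FILES, TABLE_SCHEMA)
revenue = conn.execute(
    "SELECT user_id, SUM(price) FROM events "
    "WHERE event = 'purchase' GROUP BY user_id ORDER BY user_id"
).fetchall()
print(revenue)
```

A real lakehouse engine scans the files in place instead of copying rows into a database as this sketch does. The point here is only the separation between raw files and the table definition layered on top of them.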

Core Features of Data Lakehouses

Data lakehouses combine flexible storage with structured access and governance.

Key features include:

- Unified low-cost storage: Stores structured, semi-structured, and unstructured data in cloud object storage for affordability and scalability.

- Built-in data management: Applies schemas and supports ETL and data cleansing without needing external tools or platforms.

- ACID transaction support: Enables safe concurrent reads and writes with full transactional integrity across shared datasets.

- Standardized formats: Uses open formats like Parquet and ORC that work seamlessly across various engines and tools.

- Real-time streaming: Supports continuous ingestion and analysis of streaming data alongside historical batch data.

- Decoupled compute and storage: Scales each layer independently, supporting diverse workloads without resource contention.

- Open engine compatibility: Works with engines like Apache Spark and analytics tools like BigQuery for flexible querying.

- End-to-end governance: Manages metadata, access controls, and data lineage centrally for compliance and consistency.

- BI-ready architecture: Allows direct BI tool access to lakehouse data, reducing duplication and improving reporting speed.
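End-to-end governance depends on one central place that every engine consults. The following is a toy, in-memory sketch of that idea. The `Catalog` class, its method names, and the `SELECT`/`MODIFY` privilege strings are hypothetical, chosen for illustration rather than taken from any product. Every access check is logged whether it succeeds or fails, and lineage is recorded as a mapping from derived tables to their sources.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Catalog:
    """Hypothetical central catalog: grants, lineage, and an append-only audit log."""

    grants: dict = field(default_factory=dict)   # (principal, table) -> set of privileges
    lineage: dict = field(default_factory=dict)  # target table -> set of source tables
    audit_log: list = field(default_factory=list)

    def grant(self, principal: str, table: str, privilege: str) -> None:
        self.grants.setdefault((principal, table), set()).add(privilege)

    def check(self, principal: str, table: str, privilege: str) -> bool:
        """Decide whether access is allowed and record the decision either way."""
        allowed = privilege in self.grants.get((principal, table), set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "principal": principal,
            "table": table,
            "privilege": privilege,
            "allowed": allowed,
        })
        return allowed

    def record_lineage(self, target: str, sources: list) -> None:
        self.lineage.setdefault(target, set()).update(sources)


catalog = Catalog()
catalog.grant("analyst@example.com", "sales.orders", "SELECT")
catalog.record_lineage("marts.daily_revenue", ["sales.orders", "sales.refunds"])

can_read = catalog.check("analyst@example.com", "sales.orders", "SELECT")
can_modify = catalog.check("analyst@example.com", "sales.orders", "MODIFY")
print(can_read, can_modify)  # True False
print(len(catalog.audit_log))  # 2
```

Keeping these decisions in a single catalog, rather than re-implementing them in each engine or BI tool, is what lets every consumer of the lakehouse enforce the same rules on the same data.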

Data Warehouse vs. Data Lake vs. Data Lakehouse

Understanding the differences between these architectures helps you choose the right data solution. A lakehouse combines their strengths to support modern analytics needs.

- Data warehouse: Designed for structured data and fast SQL-based analytics; ideal for BI and dashboards but often costly and inflexible.

- Data lake: Offers scalable, low-cost storage for raw data of all types, but often lacks fast query performance, schema enforcement, and governance.

- Data lakehouse: Merges the reliability of warehouses with the scalability of lakes; supports BI, ML, and real-time analytics from one system.

Challenges of Data Lakehouses

While lakehouses offer a powerful unified data architecture, they also come with technical and operational complexities that teams must address.

Key challenges include:

- High implementation complexity: Building a lakehouse from scratch involves configuring storage, catalogs, compute, governance, and streaming pipelines.

- Integration with AI: Modern lakehouses must support AI and ML workloads, requiring tight alignment with tools for training, inference, and feature engineering.

- Tool interoperability: Ensuring compatibility with various engines like Spark, Presto, or BigQuery requires careful planning and vendor support.

- Operational overhead: Managing metadata, versioning, access controls, and performance optimization demands ongoing engineering effort.

- Out-of-the-box vs. custom builds: Organizations must choose between fully managed solutions and stitching together open components, each with trade-offs.
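Performance optimization is a good example of that ongoing operational effort. Streaming and frequent small writes tend to leave many small files behind, which slows down scans, so teams periodically compact them. The sketch below only plans such a compaction, using first-fit-decreasing bin packing. The function `plan_compaction`, the file names, and the size thresholds are hypothetical. The sketch leaves out the actual rewrite of each group into one file and the atomic commit of that rewrite.

```python
MB = 1024 * 1024


def plan_compaction(file_sizes: dict, target_bytes: int, small_threshold: int) -> list:
    """Group files smaller than small_threshold into bins of at most target_bytes."""
    small = sorted(
        ((path, size) for path, size in file_sizes.items() if size < small_threshold),
        key=lambda item: item[1],
        reverse=True,  # first-fit decreasing: place the largest files first
    )
    bins = []  # each bin is [total_bytes, [paths]]
    for path, size in small:
        for b in bins:
            if b[0] + size <= target_bytes:
                b[0] += size
                b[1].append(path)
                break
        else:
            bins.append([size, [path]])
    # A bin holding a single file would just rewrite it unchanged, so skip those.
    return [paths for _, paths in bins if len(paths) > 1]


files = {
    "part-a.parquet": 40 * MB,
    "part-b.parquet": 30 * MB,
    "part-c.parquet": 50 * MB,
    "part-d.parquet": 10 * MB,
    "part-e.parquet": 200 * MB,  # already large enough; left alone
    "part-f.parquet": 5 * MB,
}
plan = plan_compaction(files, target_bytes=100 * MB, small_threshold=64 * MB)
print(plan)
# [['part-c.parquet', 'part-a.parquet', 'part-d.parquet'], ['part-b.parquet', 'part-f.parquet']]
```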

Real-World Use Cases of Data Lakehouses

Data lakehouses serve diverse industries by unifying storage and analytics. Their ability to handle structured and unstructured data makes them valuable for both operational and strategic decision-making.

- Healthcare: Store and analyze EHRs, device data, and population metrics to improve patient outcomes and streamline care delivery.

- Finance: Support real-time analysis of transactions, risk models, and fraud detection to drive smarter investment decisions.

- Retail: Combine POS data, customer interactions, and web logs to optimize marketing, pricing, and personalized shopping experiences.

- Manufacturing: Integrate process logs, IoT sensor data, and supply chain info to enhance production efficiency and reduce downtime.

- Government: Aggregate public health, tax, and civic data to inform policy-making and improve transparency in citizen services.

Explore Data Lakehouses in Detail

Data lakehouses are becoming the new standard for unified analytics infrastructure. Whether you’re consolidating your warehouse and data lake or starting fresh, lakehouses give you the flexibility to handle modern data at scale. See "Data Lakehouse: A Guide to Modern Data Architecture in 2025" for practical examples, architecture guides, and step-by-step tutorials on building a lakehouse using BigQuery and open formats.

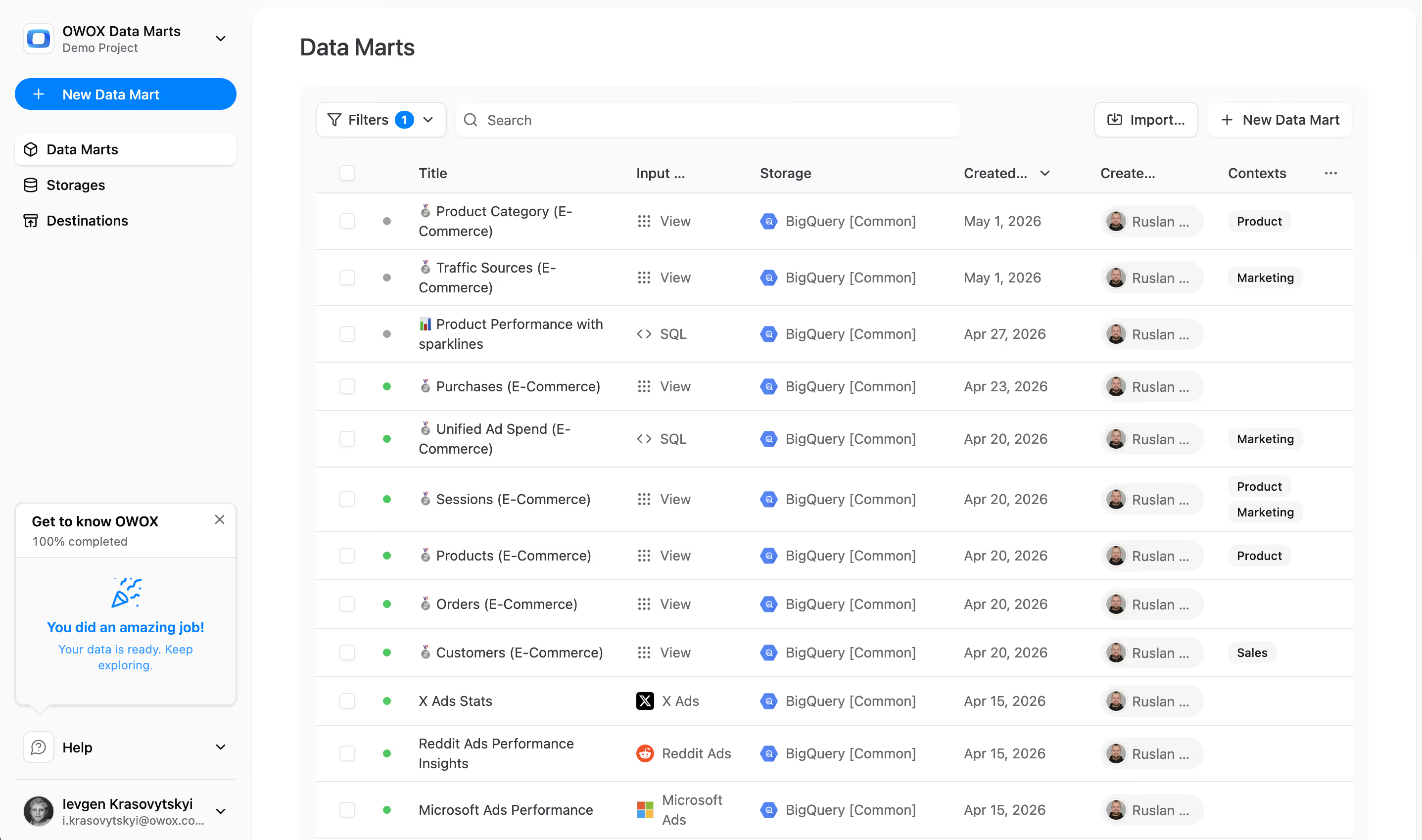

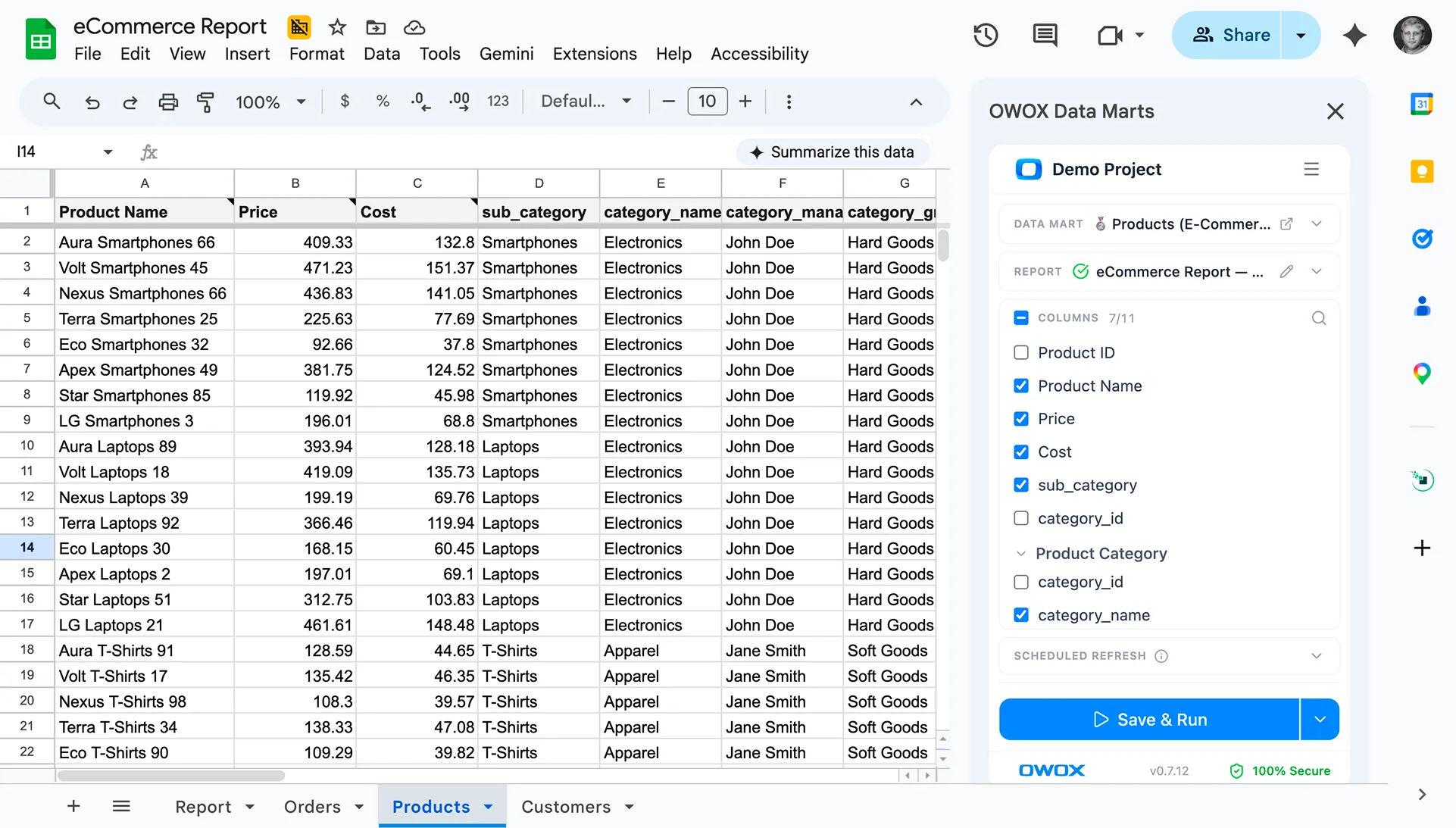

Enhance Your Data Handling with OWOX BI SQL Copilot for BigQuery

Managing lakehouse data often requires complex SQL for modeling and transformations. With OWOX BI SQL Copilot, you can use natural-language prompts to generate and debug SQL for BigQuery. Whether you are querying large datasets or refining semi-structured logs, the Copilot helps you write faster, cleaner SQL, reducing manual effort so analysts can focus on insights rather than syntax.
