Why Snowflake Became the Most Popular Cloud Data Warehouse

Discover the technical and business factors behind Snowflake’s rise as the leading cloud data warehouse and its impact on analytics strategies.

Over the past decade, Snowflake has gone from an interesting alternative to legacy data warehouses to the de facto standard for modern analytics teams. It didn’t win by being “just another cloud database.” It won by forcing a reset in how organizations think about scale, cost, and usability in their analytics stack.


Instead of lifting-and-shifting an on‑prem data warehouse into the cloud, Snowflake re‑designed core architectural and economic assumptions: storage and compute are separated, workloads can be isolated yet share the same data, and you pay for what you actually use.

For data leaders under pressure to deliver faster insights with leaner teams, that combination proved hard to ignore.

Today, Snowflake is rarely evaluated in isolation. It sits at the center of a broader analytics ecosystem: ingestion tools, transformation frameworks, BI platforms, reverse ETL, and governance layers.

For many organizations, the strategic question is no longer “Should we use Snowflake?” but “How do we build reliable, governed, self‑service analytics on top of Snowflake without drowning in complexity and costs?”

This article breaks down the technical, economic, and ecosystem factors that led Snowflake to its current position. We’ll look at:

- How Snowflake’s architecture changed expectations around elasticity and concurrency

- Why its pricing and consumption model resonated with data and finance teams

- How ecosystem maturity and skills availability reinforced its dominance

- What all of this means when you’re building governed, self‑service analytics for business stakeholders

Along the way, we’ll connect these trends to practical decisions facing data teams: warehouse design, cost management, data modeling, and the choice of tooling for analytics and activation.

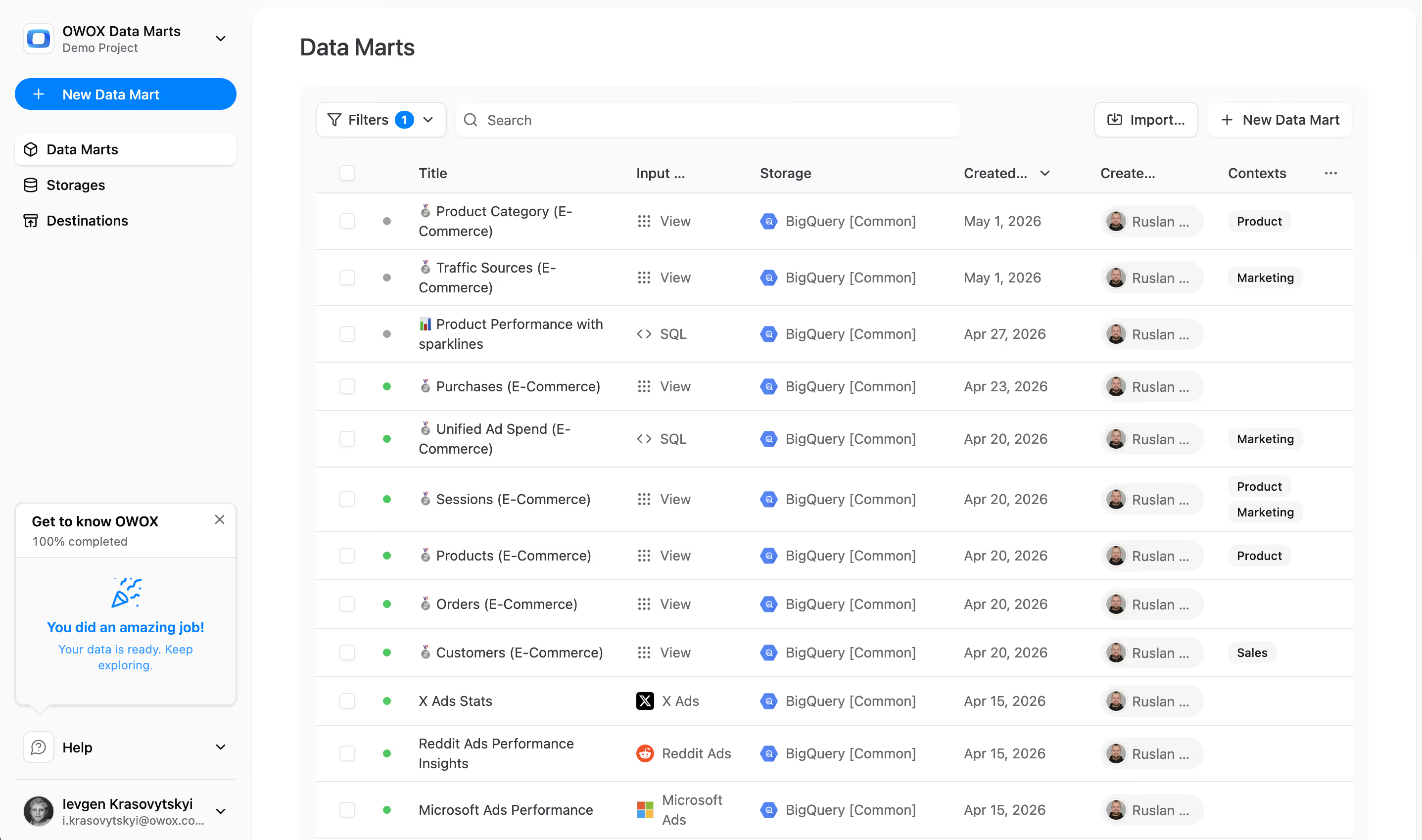

For teams centralizing their data in Snowflake, one of the hardest problems isn’t standing up the warehouse - it’s operationalizing trusted, business‑ready data marts that different teams can use without breaking shared definitions or exploding warehouse costs.

That’s where solutions like OWOX Data Marts can help you automate and govern a reporting layer on top of Snowflake data, while keeping full transparency and control over data transformations and spend.

Snowflake’s Rapid Rise in the Analytics World: Why Timing and Market Fit Made All the Difference

Snowflake’s journey from an unknown startup to the default cloud data warehouse didn’t happen in a vacuum. It arrived at a moment when data teams were stuck between aging, rigid on‑premise warehouses and first‑generation “cloudified” solutions that still carried many of the same limitations.

Around its launch, organizations were already migrating core workloads to the cloud, but analytics lagged behind.

BI teams were wrestling with performance bottlenecks, concurrency issues, and painful infrastructure management.

At the same time, executives were demanding fresher data, more granular reporting, and experimentation‑driven product and marketing strategies.

Snowflake stepped into this gap with an architecture and business model that were aligned with where the market was going, not where it had been. Understanding how that alignment played out is critical for data leaders designing long‑term platform strategies today.

In short, Snowflake’s rapid adoption was fueled by a convergence of technology maturity, cloud economics, and changing expectations about what a data warehouse should do for the business.

From Niche Warehouse to Default Choice for Modern Data Teams

In its early years, Snowflake was a tool for forward‑leaning analytics teams willing to experiment with a new kind of warehouse.

Its pitch was simple but powerful: elastic compute, simplified operations, and a SQL‑friendly interface that didn’t require exotic skills.

What differentiated Snowflake early on was not just features, but what those features enabled:

- Analysts could spin up isolated virtual warehouses for ETL, BI, and data science without stepping on each other’s queries.

- Teams no longer had to manage indexes, vacuuming, or physical clustering in the same way as traditional systems.

- Costs became tied to actual usage rather than a fixed, over‑provisioned cluster.

Innovative teams in digital‑first companies adopted Snowflake first, using it to power near real‑time product analytics, marketing attribution, and cross‑channel customer views.

Their success stories created the social proof and reference architectures that more conservative enterprises needed before making the switch.

Over time, as more tools in the modern data stack integrated deeply with Snowflake, the “niche” option quietly became the safe, mainstream choice.

The Market Context Snowflake Entered and Why Timing Mattered

Snowflake benefited from launching into a market primed for change:

- Cloud infrastructure had matured. AWS, GCP, and Azure were stable enough for core workloads, and CIOs were actively pushing “cloud‑first” strategies.

- Data volumes and variety were exploding. Clickstream, mobile events, SaaS app data, and IoT streams were overwhelming traditional warehouses.

- Analytics expectations had shifted. Business teams wanted self‑service dashboards, experimentation, and near real‑time reporting, not just monthly static reports.

- Engineering resources were constrained. Data teams needed platforms that abstracted infrastructure details so they could focus on modeling and governance.

Snowflake’s core ideas (separation of storage and compute, near‑infinite concurrency, and pay‑as‑you‑go pricing) directly addressed these trends at exactly the moment when tolerance for legacy constraints was collapsing.

In other words, if Snowflake had launched five years earlier, the cloud story might have been too immature. Five years later, the market might have already consolidated around other paradigms. Timing amplified its product‑market fit.

Why Understanding Snowflake’s Success Matters for Your Data Strategy

For data leaders, Snowflake’s rise is more than an interesting case study. It’s a reminder that:

- Platform choices are only as good as their alignment with where your organization, and the broader ecosystem, is heading over the next 5–10 years.

- Architectural principles (elasticity, workload isolation, governance) matter more than individual features that may change quarterly.

- Vendor ecosystems and integrations can be as decisive as the core engine itself.

Summarizing the main reasons behind Snowflake’s rapid adoption:

- Strong alignment with cloud‑first IT strategies

- Clear relief for legacy warehouse pain points (performance, concurrency, maintenance)

- Simple, consumption‑based pricing that finance teams could understand

- Rapid ecosystem growth and tooling support in the modern data stack

- Familiar SQL interface, lowering adoption and migration friction

Understanding these dynamics helps you evaluate not just Snowflake, but any core data platform you bring into your stack.

It also surfaces a second, equally important question: once you’ve chosen Snowflake, how do you operationalize governed, reusable data models and data marts on top of it without recreating old silos in a new environment?

This is where solutions like OWOX Data Marts can help by automating and standardizing analytics‑ready layers in Snowflake, while keeping modeling transparent and maintainable for your team.

The Cloud-Native Architecture Changed Expectations for Scalability and Performance

Snowflake’s popularity is tightly linked to one core idea: treat the data warehouse as a truly cloud‑native system, not a traditional database lifted into someone else’s data center. This architectural reset is what allowed Snowflake to scale elastically, support many concurrent users, and still remain simple enough for analytics teams to manage.

Instead of forcing all workloads through a single cluster, Snowflake separates responsibilities: one layer stores and manages data, another handles query processing, and a third coordinates everything. For data teams, the result is fewer trade‑offs between performance, cost, and simplicity.

At a high level, this architecture solved three chronic problems of legacy warehouses:

- Scaling storage and compute independently

- Managing contention between different workloads and teams

- Maintaining predictable performance as adoption grows

Understanding how these pieces fit together is key if you’re planning your long‑term analytics architecture on Snowflake or evaluating alternatives.

Separation of Storage and Compute and Why It Was a Breakthrough

In traditional on‑prem and early cloud warehouses, storage (how much data you can keep) and compute (how fast you can process it) are tightly coupled. You scale them together by buying a bigger box or a larger cluster. That leads to familiar pain:

- You overpay for compute just to get enough storage, or vice versa.

- Scaling for a short‑term spike requires permanent infrastructure upgrades.

- Maintenance windows and capacity planning become constant headaches.

Snowflake broke this coupling. Data is stored once in cloud object storage (e.g., S3, GCS, Azure Blob), while compute resources are provisioned separately as virtual warehouses.

Practically, this means:

- You can store petabytes of data cheaply, without locking yourself into oversized compute.

- You can scale compute up or down independently of your data size.

- You pay for compute only when it’s running; storage is billed separately at a low, predictable rate.

For data leaders, this translates into more precise control over both performance and cost. You no longer have to choose between:

- Keeping everything in a single giant cluster to avoid complexity, or

- Splitting workloads across many underutilized clusters that are expensive to maintain.

Instead, you centralize the data and flexibly right‑size compute per workload.
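To make the coupling problem concrete, here is a minimal sizing sketch. The per-node capacities and the workload figures are hypothetical numbers chosen only for illustration; they do not describe any real system.

```python
import math

# Hypothetical capacities bundled into each node of a coupled cluster.
NODE_STORAGE_TB = 10
NODE_COMPUTE_UNITS = 4


def coupled_nodes(storage_tb: float, compute_units: float) -> int:
    """A coupled cluster must satisfy both needs, so the larger one sets its size."""
    by_storage = math.ceil(storage_tb / NODE_STORAGE_TB)
    by_compute = math.ceil(compute_units / NODE_COMPUTE_UNITS)
    return max(by_storage, by_compute)


def idle_compute_units(storage_tb: float, compute_units: float) -> float:
    """Compute that is provisioned only because storage forced extra nodes."""
    provisioned = coupled_nodes(storage_tb, compute_units) * NODE_COMPUTE_UNITS
    return provisioned - compute_units


# A storage-heavy workload: lots of history, modest query load.
nodes = coupled_nodes(storage_tb=200, compute_units=8)
idle = idle_compute_units(storage_tb=200, compute_units=8)
print(f"coupled cluster: {nodes} nodes, {idle} compute units sitting idle")
```

With storage and compute separated, the same workload would keep 200 TB in object storage and provision only the 8 compute units it actually needs.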

Key benefits of storage–compute separation:

- Independent scaling of data volume and processing power

- More efficient use of compute, especially for bursty workloads

- Simpler capacity planning and fewer architectural “forks”

- Easier central governance over a single source of truth

Elastic Virtual Warehouses and Solving the Concurrency Problem

The second major innovation is Snowflake’s concept of elastic virtual warehouses. A virtual warehouse is essentially a compute cluster dedicated to executing queries. Crucially, multiple warehouses can operate on the same underlying data at the same time.

In older systems, all users and jobs share a single pool of compute. When ETL jobs, BI dashboards, and data science experiments run concurrently, they compete for resources. The result: slow dashboards, timeouts during peak hours, and frustrated stakeholders.

Snowflake addresses this by letting you create multiple, independent warehouses:

- A small warehouse for ad‑hoc analyst queries

- A medium or large warehouse for nightly batch transformations

- A separate warehouse for BI dashboards, tuned for predictable latency

- Additional warehouses for data science, QA, or external partners

Each warehouse has its own compute resources and can be scaled up (more power) or out (more clusters) without affecting others. You can even configure auto‑suspend and auto‑resume so that warehouses only run, and only cost you money, when they’re actually used.

This architecture effectively solves the concurrency problem:

- One team running a heavy backfill won’t degrade the performance of executive dashboards.

- Multiple BI tools and SQL editors can query the same data in parallel.

- High‑value workloads can be isolated and guaranteed the resources they need.

From a governance perspective, this also opens the door to better cost allocation. Different teams or departments can be mapped to their own warehouses, giving finance and data leaders clearer visibility into who is consuming which resources.
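The warehouse-per-workload layout described above could be captured as configuration and rendered into `CREATE WAREHOUSE` statements. This is a sketch, not a recommended setup: the warehouse names, sizes, and suspend timeouts are hypothetical, and multi-cluster settings (`MIN_CLUSTER_COUNT` / `MAX_CLUSTER_COUNT`) depend on your Snowflake edition, so check the current documentation before running anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WarehouseSpec:
    name: str                  # hypothetical warehouse name
    size: str                  # e.g. 'XSMALL', 'MEDIUM', 'LARGE'
    auto_suspend_seconds: int  # idle time before the warehouse suspends
    max_clusters: int = 1      # >1 requests multi-cluster scale-out


def create_statement(spec: WarehouseSpec) -> str:
    """Render one CREATE WAREHOUSE statement from a spec."""
    parts = [
        f"CREATE WAREHOUSE IF NOT EXISTS {spec.name}",
        f"  WAREHOUSE_SIZE = '{spec.size}'",
        f"  AUTO_SUSPEND = {spec.auto_suspend_seconds}",
        "  AUTO_RESUME = TRUE",
        "  INITIALLY_SUSPENDED = TRUE",
    ]
    if spec.max_clusters > 1:
        parts.append("  MIN_CLUSTER_COUNT = 1")
        parts.append(f"  MAX_CLUSTER_COUNT = {spec.max_clusters}")
    return "\n".join(parts) + ";"


# One isolated warehouse per workload, all reading the same stored data.
SPECS = [
    WarehouseSpec("ADHOC_WH", "XSMALL", auto_suspend_seconds=60),
    WarehouseSpec("ETL_WH", "LARGE", auto_suspend_seconds=120),
    WarehouseSpec("BI_WH", "MEDIUM", auto_suspend_seconds=300, max_clusters=3),
]

for spec in SPECS:
    print(create_statement(spec), end="\n\n")
```

Keeping the specs in code also gives you a natural place to attach each warehouse to an owning team for cost attribution.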

How elastic virtual warehouses change the game:

- Workload isolation without data duplication

- Fine‑grained control over performance and SLAs per team or use case

- Automatic pausing to avoid paying for idle capacity

- Scalability for both scheduled and ad‑hoc workloads

Performance, Simplicity, and the Appeal for Both Engineers and Data Analysts

What makes Snowflake’s architecture especially compelling is that it improves both technical and non‑technical user experience.

For engineers and data platform teams:

- Less infrastructure to babysit. No manual index tuning, sharding, or complex cluster management.

- Predictable performance. If a workload is slow, you can scale its warehouse up or out without rewriting everything.

- Cleaner multi‑tenant designs. You can enforce resource boundaries per team or product without duplicating data.

For analysts and business users:

- Fewer slowdowns during peak hours. Dashboards and queries are less likely to be impacted by heavy ETL or backfills.

- Consistent access to the same data. Everyone queries a unified store, reducing discrepancies between reports.

- Faster iteration. Analysts can experiment, prototype models, or run complex queries without waiting for “off‑peak” windows.

From a stack design standpoint, this architecture provides a solid foundation for governed, self‑service analytics.

You can centralize raw and modeled data in Snowflake, then layer tools on top to handle transformation, semantic modeling, and data marts.

Where many teams struggle is not with Snowflake’s core architecture, but with turning that flexible, shared warehouse into a well‑governed set of reusable data marts for marketing, product, finance, and operations.

That’s where solutions like OWOX Data Marts can help automate and standardize the analytics layer on top of Snowflake, so teams get reliable, business‑ready tables without re‑engineering the warehouse every time a new use case appears.

Architecture benefits at a glance:

- True separation of storage and compute for flexible scaling

- Elastic virtual warehouses for workload isolation and high concurrency

- Improved cost control with pay‑per‑use compute and auto‑suspend

- Predictable performance across varied workloads and teams

- Simpler operations compared to traditional warehouse management

These design choices didn’t just make Snowflake faster; they reset expectations about what “good” looks like in a cloud data warehouse, and set the baseline for modern analytics platforms that sit on top of it.

Pricing, UX, and the Business Model Behind Wide Adoption

Snowflake’s technical architecture made it attractive for engineers, but its pricing model and user experience made it palatable for finance and business stakeholders.

That combination is a big part of why it spread so quickly beyond early adopters to become a standard analytics platform across industries.

Instead of large upfront licenses or fixed cluster commitments, Snowflake’s model is straightforward: you pay for compute when it runs and for storage based on how much data you keep.

On top of that, the product is designed so analysts can be productive with familiar SQL and a clean UI, without needing deep database internals.

For data leaders, this meant they could:

- Start with a contained use case and prove value quickly

- Scale to cross‑department analytics without constantly renegotiating licenses

- Maintain a single platform that serves engineers, analysts, and business users

Consumption-Based Pricing and Aligning Cost with Value

Snowflake’s consumption‑based pricing is built around “credits” for compute and separate, low‑cost storage charges. You’re billed for:

- Compute usage: Credits consumed when virtual warehouses or specific services run

- Storage: Compressed data stored in Snowflake‑managed cloud storage

- Optional features: Such as advanced security or replication, depending on the edition

The critical point is that compute, the expensive part, only accrues cost while warehouses are active. Features like auto‑suspend and auto‑resume help ensure you’re not paying for idle capacity.

This model appealed to both data and finance teams because:

- Costs scale with usage, making pilots and phased rollouts less risky

- It’s easier to tie spend back to specific workloads, teams, or projects

- Over‑provisioning is no longer a structural requirement “just in case”
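The credit arithmetic behind this model can be sketched in a few lines. The credits-per-hour table reflects the commonly documented pattern for standard warehouses (each size up doubles the rate), and the 60-second minimum per resume reflects Snowflake's per-second billing as generally described. Treat both as assumptions to verify against current documentation. The run durations are made up.

```python
# Assumed credits per hour for standard warehouses; verify against current docs.
CREDITS_PER_HOUR = {"XSMALL": 1, "SMALL": 2, "MEDIUM": 4, "LARGE": 8}

# Assumed minimum billed time each time a warehouse starts or resumes.
MIN_BILLED_SECONDS = 60


def credits_used(size: str, active_runs_seconds: list[int]) -> float:
    """Credits for a warehouse that suspends between runs.

    Each run is billed per second, with a minimum of MIN_BILLED_SECONDS.
    """
    billed_seconds = sum(max(run, MIN_BILLED_SECONDS) for run in active_runs_seconds)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600


def credits_always_on(size: str, hours: float) -> float:
    """Credits for the same warehouse if it never suspends."""
    return CREDITS_PER_HOUR[size] * hours


# Hypothetical day: a 30-minute batch, a 30-second query, and a 10-minute refresh.
with_suspend = credits_used("MEDIUM", [1800, 30, 600])
without_suspend = credits_always_on("MEDIUM", 24)
print(f"auto-suspend: {with_suspend:.2f} credits, always on: {without_suspend} credits")
```

Multiplying either figure by your contracted price per credit gives the dollar cost; that price varies by edition, region, and contract, so it is left out here.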

However, aligning cost with value doesn’t happen automatically. Organizations that benefit most from Snowflake’s model:

- Define clear policies for warehouse sizing and auto‑suspend

- Monitor credit consumption by team or environment

- Treat cost as an operational metric alongside performance and reliability

When you combine this with good governance and modeling practices (e.g., well‑designed data marts rather than ad‑hoc queries on raw data), it becomes much easier to demonstrate ROI to stakeholders.
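Monitoring credit consumption by team, as suggested above, mostly comes down to mapping warehouses to owners and summing usage. The sketch below works on in-memory sample rows. In practice the rows might come from a metering view such as `SNOWFLAKE.ACCOUNT_USAGE.WAREHOUSE_METERING_HISTORY`, but the warehouse names, team names, and credit values here are hypothetical.

```python
from collections import defaultdict

# Hypothetical mapping from warehouse to owning team.
WAREHOUSE_OWNER = {
    "BI_WH": "analytics",
    "ETL_WH": "data-eng",
    "DS_WH": "data-science",
}

# Sample (warehouse_name, credits_used) rows; values are made up.
usage_rows = [
    ("ETL_WH", 12.5),
    ("BI_WH", 3.0),
    ("ETL_WH", 7.5),
    ("DS_WH", 4.0),
    ("ADHOC_WH", 1.0),  # no owner on record
]


def credits_by_team(rows, owners):
    """Sum credits per owning team; unmapped warehouses go to 'unassigned'."""
    totals = defaultdict(float)
    for warehouse, credits in rows:
        totals[owners.get(warehouse, "unassigned")] += credits
    return dict(totals)


for team, credits in sorted(credits_by_team(usage_rows, WAREHOUSE_OWNER).items()):
    print(f"{team:>12}: {credits:.1f} credits")
```

Surfacing an explicit "unassigned" bucket is a deliberate choice: warehouses nobody owns are often where unexplained spend accumulates.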

Lower Friction to Start Small Then Scale Across Teams

Snowflake’s packaging and onboarding are deliberately designed to reduce initial friction:

- Easy trial and POCs. Teams can spin up an environment quickly, load a subset of data, and test real workloads without committing to large licenses.

- Straightforward editions. Different account editions (Standard, Enterprise, etc.) map to security and feature needs, not arbitrary user counts.

- No hardware planning. There’s no capacity procurement phase; you select warehouse sizes and adjust as you observe actual workloads.

This naturally supports an incremental adoption pattern:

- Single-team or single-domain pilot (e.g., marketing analytics, product usage, or finance reporting).

- Expansion to adjacent data domains, leveraging the same centralized warehouse.

- Organization‑wide adoption, with multiple teams running their own warehouses against shared models and data marts.

Because each team can operate on the same data with isolated compute, you don’t have to create parallel stacks for every department. That reduces integration work and helps avoid data silos re‑emerging in a new form.

Key pricing and business model advantages:

- Low upfront commitment, making it easy to pilot and prove value

- Pay‑for‑use compute tied to real workloads

- Storage is priced predictably and independently of compute

- Clear paths from small‑team adoption to enterprise‑wide rollout

- Cost visibility and attribution via separate warehouses and projects

Product Experience That Favors Analysts Not Just Data Engineers

Beyond pricing, Snowflake made intentional UX choices to make the platform accessible to analysts and power users, not just data engineers:

- Browser‑based UI with SQL editor. Analysts can connect, run queries, and manage objects without installing heavy clients.

- Familiar SQL surface. By emphasizing ANSI‑like SQL and hiding many storage details, Snowflake lowered the learning curve for people coming from other databases.

- Integrated role and object management. Permissions can be handled in the UI or via SQL, letting data teams balance governance with agility.

- Strong ecosystem integration. BI tools, ELT platforms, and notebooks commonly include native Snowflake connectors, making it easy for non‑engineers to work with Snowflake data directly.

The result is a user experience where:

- Engineers can automate ingestion, transformations, and governance using APIs, code, and CI/CD.

- Analysts can self‑serve much of their own work (exploratory analysis, report building, and basic modeling) without waiting in engineering queues.

- Business users benefit from faster iteration cycles and more responsive reporting because bottlenecks shift away from infrastructure and toward data modeling quality.

This is also where the limitations start to show for many organizations: Snowflake is excellent at being a warehouse, not an end‑to‑end analytics product.

Teams still need clear semantic layers, data marts, and governed metrics definitions that analysts and BI tools can rely on.

Solutions like OWOX Data Marts are designed to fill that gap by automating the creation of analytics‑ready tables and business‑friendly schemas on top of Snowflake, while keeping all logic transparent and version‑controlled. That way, you get the UX and speed business users expect, grounded in a robust, Snowflake‑native data model.

By combining Snowflake’s consumption model and UX with a well‑designed analytics layer, organizations can scale adoption across teams without losing control over costs, definitions, or data quality.

Ecosystem Effects: How the Modern Data Stack Amplified Snowflake’s Growth

Snowflake didn’t grow into the most popular cloud data warehouse by features alone. Its rise coincided with, and was accelerated by, the emergence of the “modern data stack”: ELT ingestion tools, transformation frameworks like dbt, cloud‑native BI, reverse ETL, and data sharing platforms.

Because Snowflake offered elastic compute, cheap storage, and a clean SQL interface, it quickly became the default “center of gravity” that other tools integrated with first. As more vendors optimized for Snowflake, it became progressively easier for enterprises to adopt it, and harder to justify alternative platforms that lacked the same ecosystem depth.

In practical terms, Snowflake moved from “one option among many” to the reference architecture for modern analytics. Tooling, skills, best practices, and community content all started from the assumption that Snowflake (or a Snowflake‑like warehouse) would be in the middle.

The Rise of ELT, dbt, and BI Tools on Top of Cloud Warehouses

The move from ETL (transform before loading) to ELT (load first, transform in the warehouse) was a turning point. Tools like Fivetran, Airbyte, Stitch, and others leaned into Snowflake’s ability to handle raw, semi‑structured, and high‑volume data cheaply and reliably.

This changed how data teams architected pipelines:

- Raw data first: SaaS, product, and marketing data land in Snowflake with minimal pre‑processing.

- Transform later in SQL: Business logic and modeling are implemented as SQL transformations, often orchestrated by dbt or similar frameworks.

- Version‑controlled models: Transformations are treated like software, versioned in Git, tested, and deployed via CI/CD.
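The load-first, transform-later pattern can be illustrated end to end with an in-memory SQLite database standing in for the warehouse. This is only a sketch of the ELT idea, not Snowflake-specific code; the table names and rows are hypothetical.

```python
import sqlite3

# SQLite stands in for the warehouse so the example runs anywhere.
conn = sqlite3.connect(":memory:")

# 1. Extract + Load: land source rows as-is, with no business logic applied.
conn.execute(
    "CREATE TABLE raw_orders (order_id INTEGER, status TEXT, amount_cents INTEGER)"
)
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [(1, "paid", 1250), (2, "refunded", 400), (3, "paid", 3000)],
)

# 2. Transform: business rules live in SQL and run inside the warehouse.
#    This statement is what you would keep in Git and deploy via CI/CD.
conn.execute(
    """
    CREATE TABLE fct_revenue AS
    SELECT order_id, amount_cents / 100.0 AS revenue
    FROM raw_orders
    WHERE status = 'paid'
    """
)

total_revenue = conn.execute("SELECT SUM(revenue) FROM fct_revenue").fetchone()[0]
print(f"total revenue from paid orders: {total_revenue}")
```

Because the raw table is preserved, a change to the definition of revenue only requires re-running the transformation, not re-extracting from the source system.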

dbt, in particular, became a natural complement to Snowflake:

- Its SQL‑ and YAML‑based workflows map closely to how teams want to work in a warehouse.

- Incremental models and materializations leverage Snowflake’s compute model effectively.

- Lineage, documentation, and tests help tame sprawling transformation logic.
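The idea behind an incremental model is to process only rows that arrived since the last run, which keeps compute use proportional to new data. The sketch below shows that high-water-mark logic in plain SQL against SQLite. It illustrates the general technique rather than dbt's actual implementation (dbt expresses a similar filter through its `is_incremental()` macro), and the table and column names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_events (event_id INTEGER, loaded_at INTEGER)")
conn.execute("CREATE TABLE stg_events (event_id INTEGER, loaded_at INTEGER)")


def run_incremental(conn: sqlite3.Connection) -> int:
    """Append only rows newer than the target's high-water mark.

    Returns the total row count of the target table after the run.
    """
    conn.execute(
        """
        INSERT INTO stg_events
        SELECT event_id, loaded_at
        FROM raw_events
        WHERE loaded_at > (SELECT COALESCE(MAX(loaded_at), -1) FROM stg_events)
        """
    )
    return conn.execute("SELECT COUNT(*) FROM stg_events").fetchone()[0]


# First run: everything is new.
conn.executemany("INSERT INTO raw_events VALUES (?, ?)", [(1, 100), (2, 200)])
after_first = run_incremental(conn)

# Second run: only the newly arrived row is picked up.
conn.execute("INSERT INTO raw_events VALUES (3, 300)")
after_second = run_incremental(conn)

# Third run with no new data: nothing changes.
after_third = run_incremental(conn)
print(after_first, after_second, after_third)
```

A strict `>` filter on a load timestamp can miss late-arriving rows that share the current maximum value, so production models often add a lookback window or a unique key for merging.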

On the analytics and BI side, vendors like Looker, Tableau, and many others built deep Snowflake connectors and optimizations:

- Direct query pushdown into Snowflake (instead of extracts or cubes)

- Support for Snowflake‑specific features (e.g., query tags, roles, time travel)

- Performance tuning patterns built around Snowflake’s strengths

This combination created a powerful feedback loop:

- Data teams chose Snowflake because “everything works with it out of the box.”

- Tool vendors prioritized Snowflake support because that’s where demand was.

- Best practices, blog posts, and conference talks solidified around Snowflake‑centric workflows.

Ecosystem components that reinforced Snowflake’s position:

- ELT ingestion platforms with native Snowflake targets

- dbt and other SQL‑based transformation orchestration tools

- BI platforms with optimized, pushdown‑friendly connectors

- Reverse ETL tools syncing Snowflake data back into SaaS apps

- Monitoring and cost‑management tools tailored to Snowflake’s model

Partner Ecosystem, Marketplaces, and Data Sharing as Growth Engines

Beyond tooling, Snowflake invested heavily in partnerships and its own data ecosystem.

The Snowflake Marketplace and data sharing capabilities turned the warehouse into a distribution platform:

- Data providers can offer ready‑to‑query datasets (e.g., demographics, intent data, financials) directly inside Snowflake.

- Customers can access external data without managing file transfers, ingestion scripts, or storage overhead.

- Secure data sharing allows organizations to collaborate across business units, subsidiaries, or partners with near‑zero copy architectures.

This matters because it shifts Snowflake from “a place where we store our data” to “a place where we connect our data with the outside world.” It reduces the friction of:

- Onboarding third‑party datasets

- Collaborating with partners on shared models or benchmarks

- Building data products that require controlled sharing

The partner ecosystem also amplified Snowflake’s credibility:

- Global SI and consulting partners building Snowflake‑centric offerings

- Industry‑specific solutions (e.g., for retail, fintech, healthcare) based on Snowflake

- Joint GTM motions with cloud providers, analytics vendors, and ISVs

Collectively, this ecosystem made Snowflake a platform, not just a product. It encouraged enterprises to treat Snowflake as the default hub for both internal analytics and external data collaboration.

Why Snowflake Became the Safe, Standard Bet for Enterprises

For large organizations, choosing a data platform is as much about risk management as it is about features. Snowflake’s ecosystem strength reinforced a sense that it was a “safe” long‑term bet:

- Governance and security. Enterprise‑grade features (role‑based access, data masking, compliance certifications, multi‑region replication) aligned with regulatory and security requirements.

- Scalability and availability. Proven ability to handle massive workloads across industries, with SLAs and reference architectures for high availability and DR.

- Vendor viability. Strong financials, public company status, and visible roadmap reassured CIOs and CFOs that Snowflake would be around for the long haul.

- Talent pool and skills. A growing workforce of engineers and analysts with Snowflake experience lowered adoption and hiring risks.

- Ecosystem optionality. The breadth of compatible tools meant enterprises were not locked into a single‑vendor stack for ingestion, BI, or activation.

From a strategy perspective, Snowflake checked multiple boxes:

- It integrated cleanly with existing cloud environments.

- It didn’t force a specific BI or transformation tool.

- It offered clear paths for data governance and multi‑region deployments.

- It enabled cross‑organizational collaboration and data products via sharing and marketplace capabilities.

However, while the ecosystem makes it easier to get data into Snowflake and out to tools, there is still a gap between “central warehouse” and “business‑ready analytics layer.” Many enterprises struggle with:

- Fragmented metrics and definitions across teams and tools

- Overlapping or inconsistent data marts built independently by different groups

- Rising warehouse costs from poorly governed queries and transformations

That’s where focused solutions like OWOX Data Marts add value: sitting between raw data and BI tools, they automate the creation of governed, analytics‑ready models on Snowflake while keeping logic transparent and reproducible.

In other words, Snowflake’s ecosystem made it the standard hub. The next competitive edge comes from how effectively you structure, govern, and operationalize the analytics layer that lives on top of it.

What Snowflake’s Success Reveals About the Future of Analytics and Data Governance

Snowflake proved that a cloud‑native warehouse can deliver scale, performance, and usability at the same time. But its success also exposed a new bottleneck: the hardest problems in analytics are no longer storage or compute - they’re governance, semantics, and how people actually consume insights.

Modern data stacks now start from the assumption that a platform like Snowflake will handle the raw data. The real differentiation comes from what you build on top: reusable metrics, governed data marts, semantic layers, and increasingly, AI‑assisted analysis that amplifies decision‑making.

Snowflake’s trajectory hints at where analytics is heading: centralized, trusted data foundations with decentralized, intelligent consumption across teams.

From Raw Tables to Governed Metrics and Reusable Data Marts

Snowflake makes it easy to land vast amounts of raw data. Without a structured approach on top of that, though, organizations end up with:

- Dozens of slightly different definitions of “revenue” or “active user”

- Complex, duplicated SQL logic scattered across BI tools and notebooks

- Analysts rebuilding similar models in parallel, wasting both time and credits

The future of analytics on platforms like Snowflake depends on a strong semantic layer: a consistent, centrally governed representation of business concepts that everyone can reuse.

Concretely, that means:

- Curated data marts for domains like marketing, product, finance, and sales, built from shared, tested transformations.

- Standardized metrics (e.g., LTV, churn, ROAS, CAC), defined once and reused across dashboards, experiments, and reports.

- Documented lineage that shows how a KPI ties back to source systems and logic.

Reusable data marts act as the contract between raw data and business consumption. They allow:

- BI tools and notebooks to query the same, trusted tables

- New reports to be created quickly without redefining logic

- Governance teams to audit and control change centrally

This is exactly the gap solutions like OWOX Data Marts are built to fill – automating the construction of analytics‑ready models on Snowflake, making metric definitions explicit, version‑controlled, and shareable across tools.
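As a rough illustration of "define once, reuse everywhere", a metric can live in a small registry that every consumer renders queries from. This is a generic sketch, not how OWOX Data Marts or any particular semantic layer works; the metric names, SQL expressions, and table names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    expression: str  # SQL aggregate that defines the metric
    source: str      # curated data mart table the metric reads from


# Single source of truth for metric definitions (hypothetical examples).
METRICS = {
    "revenue": Metric("revenue", "SUM(amount)", "mart_finance.orders"),
    "active_users": Metric(
        "active_users", "COUNT(DISTINCT user_id)", "mart_product.events"
    ),
}


def render_query(metric_name: str, group_by: str) -> str:
    """Build a query for a registered metric; unknown metrics raise KeyError."""
    metric = METRICS[metric_name]
    return (
        f"SELECT {group_by}, {metric.expression} AS {metric.name} "
        f"FROM {metric.source} GROUP BY {group_by}"
    )


# A dashboard and a notebook both get the same definition of revenue.
print(render_query("revenue", "order_date"))
print(render_query("active_users", "event_date"))
```

Changing how revenue is calculated then means editing one registry entry under version control, rather than hunting for copies of the logic across BI tools.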

Enabling True Self-Service Without Losing Control and Governance

One of the lessons from Snowflake’s rise is that technical scalability is only half the story. As more teams gain access to the warehouse, data leaders must reconcile two opposing forces:

- Self‑service: Give analysts and business users the freedom to explore, slice, and combine data to answer their own questions.

- Governance: Maintain control over definitions, access, privacy, and costs.

The future of analytics governance is less about locking things down and more about enabling safe autonomy. That typically involves:

- Role‑based access and data contracts. Clear boundaries around who can access raw vs. modeled data, and what guarantees each layer provides.

- Tiered data models. Raw, intermediate, and curated layers, with explicit rules for which are suitable for self‑service.

- Change management. Versioning, testing, and approvals for changes to core metrics and data marts, so dashboards don’t silently break.

- Cost and performance guardrails. Quotas, warehouse sizing policies, and monitoring to prevent runaway queries or inefficient patterns.

In such a framework, self‑service doesn’t mean everyone queries everything. It means:

- Most questions are answered from governed, curated data marts.

- Power users can reach deeper layers with clear guidelines and tooling support.

- Governance teams maintain visibility into who is using what, and how it impacts data quality and spend.

Snowflake’s architecture (isolation via warehouses, fine‑grained roles, centralized storage) makes this approach feasible. The challenge - and opportunity - is to implement governance as a product, not just a set of policies.
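One way to treat governance as a product is to keep the tiered access policy in code and derive grants from it. The sketch below assumes three layers mapped to schemas and three roles; every role name, schema name, and policy choice is hypothetical. The generated `GRANT` statements use standard Snowflake forms, but a real setup would also need database-level usage grants and a policy for future tables.

```python
LAYERS = ("raw", "intermediate", "curated")

# Hypothetical policy: which roles may query which layers.
ROLE_LAYERS = {
    "business_user": {"curated"},
    "analyst": {"curated", "intermediate"},
    "data_engineer": {"raw", "intermediate", "curated"},
}

# Hypothetical schema backing each layer.
SCHEMAS = {
    "raw": "analytics.raw",
    "intermediate": "analytics.intermediate",
    "curated": "analytics.curated",
}


def can_query(role: str, layer: str) -> bool:
    """Return True if the policy lets the role read the layer."""
    if layer not in LAYERS:
        raise ValueError(f"unknown layer: {layer}")
    return layer in ROLE_LAYERS.get(role, set())


def grant_statements(role: str, schemas: dict[str, str]) -> list[str]:
    """Render schema-level read grants for every layer the role may query."""
    statements = []
    for layer in LAYERS:
        if can_query(role, layer):
            schema = schemas[layer]
            statements.append(f"GRANT USAGE ON SCHEMA {schema} TO ROLE {role};")
            statements.append(
                f"GRANT SELECT ON ALL TABLES IN SCHEMA {schema} TO ROLE {role};"
            )
    return statements


for statement in grant_statements("analyst", SCHEMAS):
    print(statement)
```

Because the policy is data, it can be reviewed in pull requests and tested like any other change to the analytics layer.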

The Role of AI-Assisted Analysis and Proactive Insights on Trusted Data

With a stable, governed data foundation in place, the next frontier is how organizations extract insights from it. AI and ML are moving from standalone data science projects into everyday analytics workflows:

- Natural language querying allows business users to ask questions in plain language, lowering the barrier to entry.

- Automated anomaly detection surfaces unexpected trends or issues before they appear in dashboards.

- Augmented analytics suggests segments, correlations, or next‑best actions based on historical patterns.

- AI copilots for analysts help write queries, document models, and validate assumptions.
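Automated anomaly detection can sound more exotic than it needs to be. As a deliberately simple sketch, the function below flags a daily metric value when it deviates from the mean of the preceding days by more than a chosen number of standard deviations. The window size, the threshold, and the sample data are assumptions for illustration. Production systems usually also account for seasonality and trend.

```python
from statistics import mean, stdev


def find_anomalies(values, window=7, threshold=3.0):
    """Return indexes whose value is more than `threshold` standard
    deviations away from the mean of the preceding `window` values."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A flat history gives no scale to compare against; skip it.
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical daily order counts read from a curated mart.
daily_orders = [100, 102, 98, 101, 99, 103, 100, 101, 160, 102]
print(find_anomalies(daily_orders))  # → [8]
```

Note that the day after the spike is not flagged: the spike inflates the trailing standard deviation, which is one reason simple rolling checks are usually only a first line of defense.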

The catch is that AI is only as good as the underlying data and semantics. Models trained on inconsistent metrics or poorly governed tables will amplify noise, not insight. That’s why Snowflake’s success underscores a key principle for AI‑driven analytics:

Reliable AI‑assisted insights require a well‑governed, semantically consistent data layer on top of a scalable warehouse.

This is where the combination of:

- A cloud warehouse like Snowflake

- A governed semantic and mart layer (e.g., via OWOX Data Marts)

- AI‑powered interfaces and assistants

becomes particularly powerful. Data teams define and enforce the logic once; AI systems help scale access to that logic across the organization in a controlled, explainable way.

Future directions for analytics inspired by Snowflake’s model:

- Centralized storage and compute, decentralized but governed consumption

- Strong semantic layers and reusable data marts as the core of BI strategy

- Governance frameworks that prioritize safe self‑service, not just restriction

- AI‑assisted analysis built on top of trusted, well‑documented data

- Ecosystem‑driven stacks where tools interoperate around a warehouse‑centric hub

Snowflake solved the infrastructure problem so effectively that it shifted the analytics conversation. The next wave of differentiation will come from how organizations design their semantic layers, governance models, and AI‑driven experiences on top of platforms like Snowflake.

Turning Snowflake Into a Trusted, Self-Service Analytics Layer with OWOX

Snowflake gives you the scale, performance, and ecosystem to centralize data. But turning that raw capability into consistent metrics, trusted dashboards, and proactive insights across the business is still a major engineering and governance challenge.

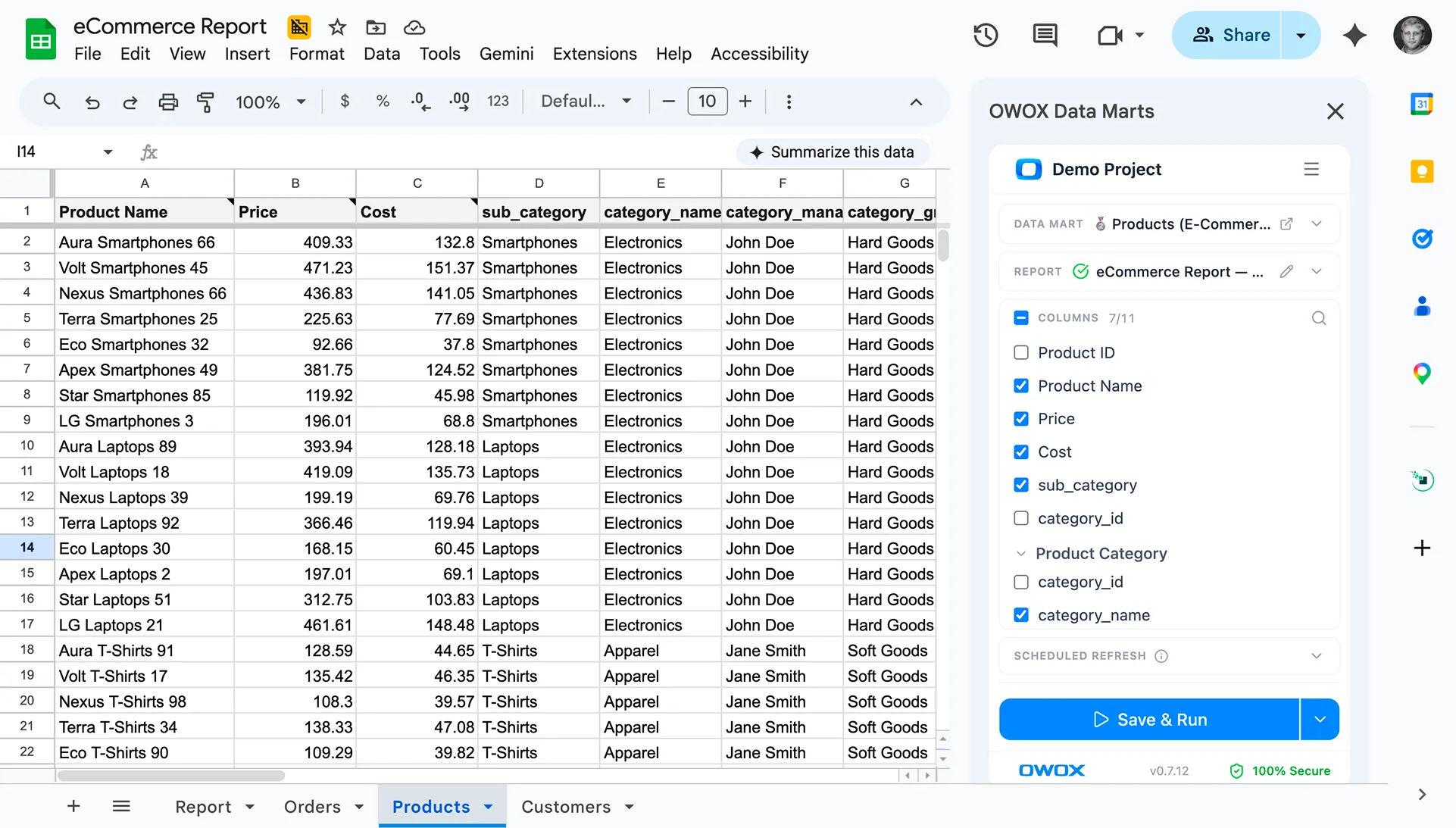

OWOX Data Marts is designed to sit directly on top of Snowflake and close this gap. It helps data teams define business logic once, automatically build and maintain analytics‑ready marts, and deliver governed insights into the tools where business users already work - while keeping all transformations transparent and under your control.

Instead of every team writing its own SQL or duplicating logic across BI tools, OWOX provides a structured way to operationalize Snowflake as a true, self‑service analytics layer.

Using OWOX Data Marts to Define and Reuse Metrics Across Teams

With OWOX Data Marts, you treat business logic and metrics as first‑class assets, not ad‑hoc queries:

- Centralized metric definitions. KPIs like revenue, ROAS, LTV, churn, or active users are defined once and compiled into Snowflake models and marts.

- Reusable data marts. Domain‑specific marts (marketing, product, sales, finance) are generated and kept in sync from shared transformation logic.

- Version control and lineage. Every change to a metric or transformation is tracked, so you know what changed, when, and why.

- Consistency across tools. The same Snowflake tables back dashboards, ad‑hoc analysis, experiments, and AI‑driven insights.

This approach turns Snowflake into a governed semantic layer:

- Analysts don’t have to re‑implement complex joins or filters every time they build a report.

- Different departments align on a shared, auditable definition of core metrics.

- Data leaders gain a single place to review, approve, and roll out changes to business logic.

Behind the scenes, OWOX compiles these definitions into efficient SQL for Snowflake and manages the refresh logic, so teams can focus on what metrics mean – not how to materialize them.
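To illustrate the general idea of "define once, compile to SQL", here is a minimal sketch of a metric registry. It is not OWOX's implementation or API. The `Metric` class, the `compile_metric` function, and the table and column names are all hypothetical, and a real system would also handle joins, filters, and refresh scheduling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A single, centrally defined metric (hypothetical structure)."""
    name: str
    expression: str   # SQL aggregate expression
    source: str       # fully qualified curated table


# One shared registry instead of per-team copies of the same logic.
METRICS = {
    "revenue": Metric("revenue", "SUM(order_total)", "ANALYTICS.CURATED.ORDERS"),
    "active_users": Metric(
        "active_users", "COUNT(DISTINCT user_id)", "ANALYTICS.CURATED.EVENTS"
    ),
}


def compile_metric(name: str, group_by: list[str]) -> str:
    """Render a SELECT statement for a registered metric."""
    metric = METRICS[name]
    columns = list(group_by) + [f"{metric.expression} AS {metric.name}"]
    sql = f"SELECT {', '.join(columns)}\nFROM {metric.source}"
    if group_by:
        sql += f"\nGROUP BY {', '.join(group_by)}"
    return sql


print(compile_metric("revenue", ["order_date"]))
```

Because every report asks the registry for "revenue" instead of re-typing the aggregate, changing the definition in one place changes it everywhere it is used.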


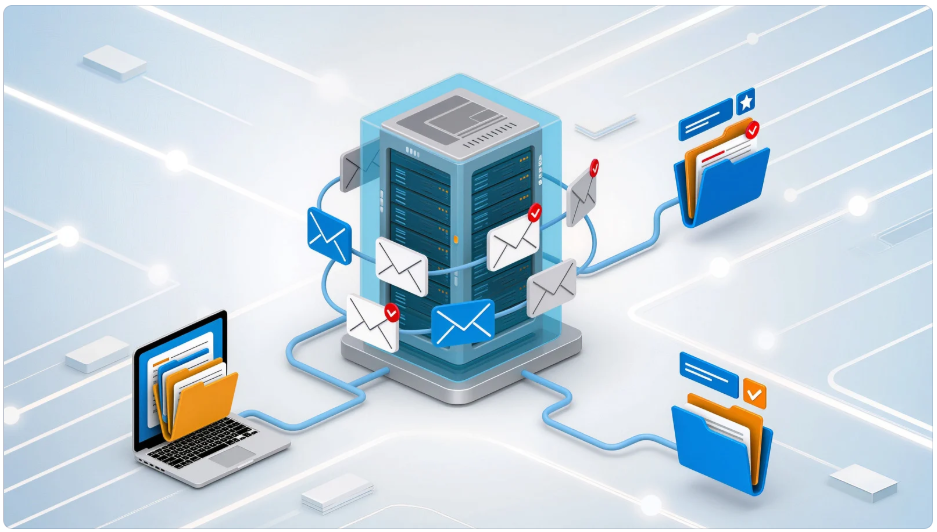

Delivering Insights Where Business Users Already Work

Trusted data is only valuable if it reaches decision‑makers in their daily workflows. OWOX Data Marts makes Snowflake‑backed insights accessible in the channels your teams actually use:

- Slack and Microsoft Teams. Schedule or trigger alerts, KPI digests, and anomaly notifications directly into channels or DMs.

- Email digests. Send periodic summaries of key metrics to stakeholders who live in their inboxes.

- Google Sheets and spreadsheets. Let analysts and managers pull governed Snowflake data into sheets for lightweight modeling or planning - without bypassing central logic.

- BI tools and dashboards. Feed curated data marts into your existing BI stack via native Snowflake connections.

Because all of this is powered by the same governed marts on Snowflake:

- The numbers in Slack match the numbers in dashboards and board decks.

- Stakeholders can drill from high‑level KPIs to underlying segments without switching platforms.

- Data teams can roll out new metrics once and see them propagate across channels automatically.

This reduces the temptation to build one‑off extracts or “shadow” datasets for specific teams, helping you keep Snowflake as the single source of truth.

Combining Governed Snowflake Data with Proactive AI Insights While Avoiding Hallucinations

AI can dramatically increase the reach and impact of your data - but only if it’s grounded in trusted, well‑modeled tables and metrics.

OWOX Data Marts combines governed Snowflake data with AI‑assisted insights to help teams move from reactive reporting to proactive decision support, while minimizing the risk of hallucinations or misleading outputs:

- AI on top of curated marts, not raw chaos. Models operate on stable, documented schemas and metrics, reducing ambiguity and error.

- Transparent logic and explanations. When AI surfaces an anomaly or recommendation, it ties back to the underlying metrics and queries, so analysts can validate and reproduce results.

- Guardrails and access control. AI‑driven features respect Snowflake roles, data masking, and governance policies, ensuring sensitive data isn’t exposed.

- Human‑in‑the‑loop workflows. Analysts can review, refine, and approve AI‑generated insights before they are broadcast to wider audiences.
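One way to picture the "curated marts only" guardrail is a pre-execution check on AI-generated SQL. The sketch below extracts table names with a naive regular expression and rejects any query that references a table outside an approved list, so a human can review it before it runs. The mart names are hypothetical, and the regex is an assumption-laden shortcut rather than a SQL parser: it errs on the side of rejecting (for example, CTE names are treated as unknown tables).

```python
import re

# Hypothetical allow-list of curated marts that AI features may query.
APPROVED_MARTS = {"ANALYTICS.CURATED.ORDERS", "ANALYTICS.CURATED.SESSIONS"}

# Naive pattern: the identifier that follows FROM or JOIN.
TABLE_REF = re.compile(r"\b(?:FROM|JOIN)\s+([A-Za-z_][\w.]*)", re.IGNORECASE)


def referenced_tables(sql: str) -> set[str]:
    """Return upper-cased table names that follow FROM or JOIN."""
    return {name.upper() for name in TABLE_REF.findall(sql)}


def review_generated_query(sql: str) -> tuple[bool, list[str]]:
    """Return (approved, offending_tables) for an AI-generated query."""
    offending = sorted(referenced_tables(sql) - APPROVED_MARTS)
    return (not offending, offending)


generated = (
    "SELECT o.order_date, SUM(p.amount) "
    "FROM analytics.curated.orders o "
    "JOIN analytics.raw.payments p ON o.id = p.order_id "
    "GROUP BY o.order_date"
)
print(review_generated_query(generated))
# → (False, ['ANALYTICS.RAW.PAYMENTS'])
```

A check like this complements warehouse-level roles and masking rather than replacing them: the warehouse still enforces what the AI feature's role can read.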

The result is AI that acts as a force multiplier for your data team - spotting issues, suggesting segments, flagging unusual behavior - without inventing its own definitions or bypassing governance.

In practice, OWOX Data Marts helps you:

- Turn Snowflake into a governed semantic and mart layer for the whole business

- Define metrics once and reuse them consistently across teams and tools

- Deliver insights into Slack, Teams, email, Sheets, and BI dashboards

- Leverage AI to surface proactive, explainable insights on trusted data

- Maintain strong governance, lineage, and cost control as adoption scales

If you’re already investing in Snowflake - or planning to - OWOX Data Marts gives you a practical way to turn that investment into a trusted, self‑service analytics layer that the whole organization can rely on.

Explore OWOX Data Marts and start building governed, reusable analytics on top of Snowflake.

Frequently asked questions

Why did Snowflake become the default cloud data warehouse?

Snowflake became the default cloud data warehouse due to its cloud-native architecture that separates storage and compute, elastic virtual warehouses that solve concurrency issues, consumption-based pricing, and strong integration with the modern data ecosystem. Together, these factors provide scalable, cost-efficient, and user-friendly analytics, enabling faster insights with simpler operational management than legacy systems.

How does Snowflake’s architecture improve scalability and concurrency?

Snowflake’s architecture separates storage and compute layers, allowing each to scale independently. This means organizations can store large volumes of data cheaply while scaling compute power flexibly to match workload demands. Additionally, elastic virtual warehouses let multiple compute clusters run concurrently on the same data, eliminating resource contention and ensuring predictable performance even with many users and heavy workloads.

How does Snowflake’s pricing model work?

Snowflake uses a consumption-based pricing model where customers pay separately for compute credits when virtual warehouses run and for storage based on data volume. This pay-as-you-go system eliminates high upfront costs and over-provisioning, allowing organizations to align costs directly with actual usage, making budgeting more predictable and reducing financial risk for phased rollouts and scaling.

What role does the modern data stack play in Snowflake’s adoption?

The tools of the modern data stack, including ELT tools like Fivetran, transformation frameworks such as dbt, and BI platforms like Looker and Tableau, integrate deeply with Snowflake’s cloud-native warehouse. This creates a workflow where raw data is ingested cheaply, transformed in place with SQL, and analyzed through native connectors, making Snowflake the central hub for end-to-end analytics and accelerating its enterprise adoption.

What challenges do organizations still face with Snowflake?

Despite Snowflake’s technical strengths, organizations often struggle with governance, semantic consistency, cost control, and operationalizing reusable data marts. Without a structured semantic layer and standardized metrics, teams risk creating fragmented and inconsistent business definitions, duplicative logic, and spiraling warehouse costs, undermining data trust and scalability.

How does OWOX Data Marts help on top of Snowflake?

OWOX Data Marts sits on top of Snowflake to automate the creation and governance of analytics-ready data marts and business metrics. It enables centralized metric definitions, version control, and lineage tracking, ensuring consistent, auditable KPIs across teams. It also delivers insights directly into business workflows and supports AI-assisted analysis, helping organizations scale self-service analytics without sacrificing control or data quality.

What does AI-assisted analysis on Snowflake enable?

AI-assisted analysis on Snowflake enables proactive, scalable insights by building on governed, semantically consistent data layers. It allows natural language querying, anomaly detection, and augmented analytics while respecting governance policies to avoid misinformation. This approach turns reactive reporting into proactive decision support, amplifying business users’ ability to extract value from trusted data.

Why did timing matter for Snowflake’s success?

Snowflake launched when cloud infrastructure matured, data volumes exploded, and analytics demands shifted towards self-service and real-time insight. Traditional warehouses were ill-equipped for these changes, while Snowflake’s architecture and consumption-based model aligned closely with market needs. This timing allowed it to gain adoption rapidly as organizations embraced cloud-first strategies and sought flexible, scalable analytics solutions.
