What Is Schema-on-Read?

Schema-on-Read is a data processing approach where the schema is applied only when data is read, offering flexibility in handling various data formats.

Schema-on-Read lets you store raw, unstructured, or semi-structured data first and define how to interpret that data only when you query it. This model is ideal for modern analytics where speed, variety, and scalability are more important than upfront structure.

Benefits of Schema-on-Read

Schema-on-Read is ideal for organizations that deal with varied, fast-changing data.

Key benefits include:

- Inexpensive storage: Raw data is stored without upfront formatting, reducing the need for costly preprocessing and saving on storage resources.

- Data freshness: As no transformations occur before storage, data remains in its most current and original form for accurate analysis.

- Agility: Teams can define or modify schemas at query time, making it easier to adapt to new questions or changing data structures.

- Faster data ingestion: Without the need to validate data against a schema before storage, data can be ingested more quickly into the system.

- Supports diverse data types: Easily accommodates structured, semi-structured, and unstructured data such as JSON, logs, and nested formats.

- Reduces upfront planning: No need to finalize the schema in advance, allowing analysts to explore and understand data before deciding on structure.

- Enables flexible analytics: The same dataset can be interpreted in multiple ways for different use cases, without duplicating or restructuring the data.

- Scales with growing data: Works well in modern data lakes and cloud environments where data grows rapidly and structure may not be consistent.

Key Features of Schema-on-Read

Schema-on-Read offers significant flexibility and adaptability, especially in big data environments.

Key features include:

- Flexible schema definition: Apply the schema only when reading the data, enabling diverse exploration without predefined structure.

- Supports multiple data types: Easily handles structured, semi-structured, and unstructured formats like JSON, XML, logs, and text.

- Raw data storage: Keeps original data intact, allowing for full traceability, reprocessing, and deeper analysis as needs evolve.

- Dynamic analysis workflows: Enables analysts to run different queries with varying structures over the same dataset.

- No upfront transformation needed: Speeds up data ingestion and reduces initial processing requirements.

How Schema-on-Read Works

Unlike schema-on-write systems, where the schema must be defined before storing data, Schema-on-Read defers this step until query time. This means raw data is ingested and stored without transformation, and the structure is applied only when it's accessed.

This late-binding approach enables the dynamic interpretation of data, making it ideal for evolving data sources, such as logs, web events, or third-party feeds. It allows analysts to reshape, filter, and analyze the same dataset in multiple ways without duplicating or reloading it.

Schema-on-Read vs. Schema-on-Write

Schema-on-Read and Schema-on-Write represent two core strategies in data processing, each with different implications for how data is stored, queried, and maintained.

Key differences include:

- Schema-on-Write: The schema is enforced before data is written into storage. This approach ensures consistency and optimizes query performance, but it requires a strict structure and upfront planning. It’s commonly used in relational databases and transactional systems.

- Schema-on-Read: The schema is applied only when the data is read. This model supports flexible exploration, faster data ingestion, and easier adaptation to evolving data formats—ideal for big data platforms and analytics workflows.

Challenges of Schema-on-Read

Despite its flexibility, Schema-on-Read presents certain limitations that can impact performance, usability, and governance in large-scale analytics environments.

Key challenges include:

- Performance trade-offs: Query-time processing can slow down performance, especially with high data volumes or complex structures.

- Advanced skill requirements: Analysts must define schemas manually during analysis, often requiring strong SQL or data modeling skills.

- Inconsistent data quality: Raw data isn’t validated during ingestion, which can lead to hidden errors or inconsistencies.

- Higher compute costs: Repeated on-the-fly parsing and transformations may increase processing costs over time.

- Governance risks: Without a predefined structure, managing access controls, data privacy, and compliance becomes more difficult.

Best Practices for Schema-on-Read

To maximize the benefits of Schema-on-Read, teams should strike a balance between flexibility and structure, ensuring that data remains accessible, trustworthy, and efficient to work with.

Key best practices include:

- Use semantic layers: Create reusable views or virtual models that apply structure to raw data without altering the source.

- Document query logic: Maintain clear documentation for how schemas are applied, including business definitions and field transformations.

- Validate during reads: Apply checks within queries to catch missing values, mismatched types, or malformed entries early.

- Leverage SQL functions: Use BigQuery functions like JSON_VALUE or SAFE_CAST to handle semi-structured data cleanly.

- Optimize common queries: Materialize frequently used transformations as summary tables or views to reduce repeated computation.

- Set access controls: Protect raw datasets with permissions and limit who can query sensitive or unstructured data directly.

- Monitor performance: Track query costs and execution times to identify opportunities for optimization or schema tuning.

Real-World Use Cases of Schema-on-Read

Schema-on-Read is ideal for organizations working with diverse, fast-changing, or unstructured data.

Key use cases include:

- Data integration: Easily combines data from multiple sources with varying formats, avoiding upfront transformation.

- Real-time data streaming: Processes evolving data streams—such as logs or events, without requiring a fixed schema.

- Big data analysis: Handles large volumes of semi-structured or unstructured data, common in data lake environments.

- Data exploration: Empowers analysts to query unfamiliar datasets quickly, uncovering insights without predefined models.

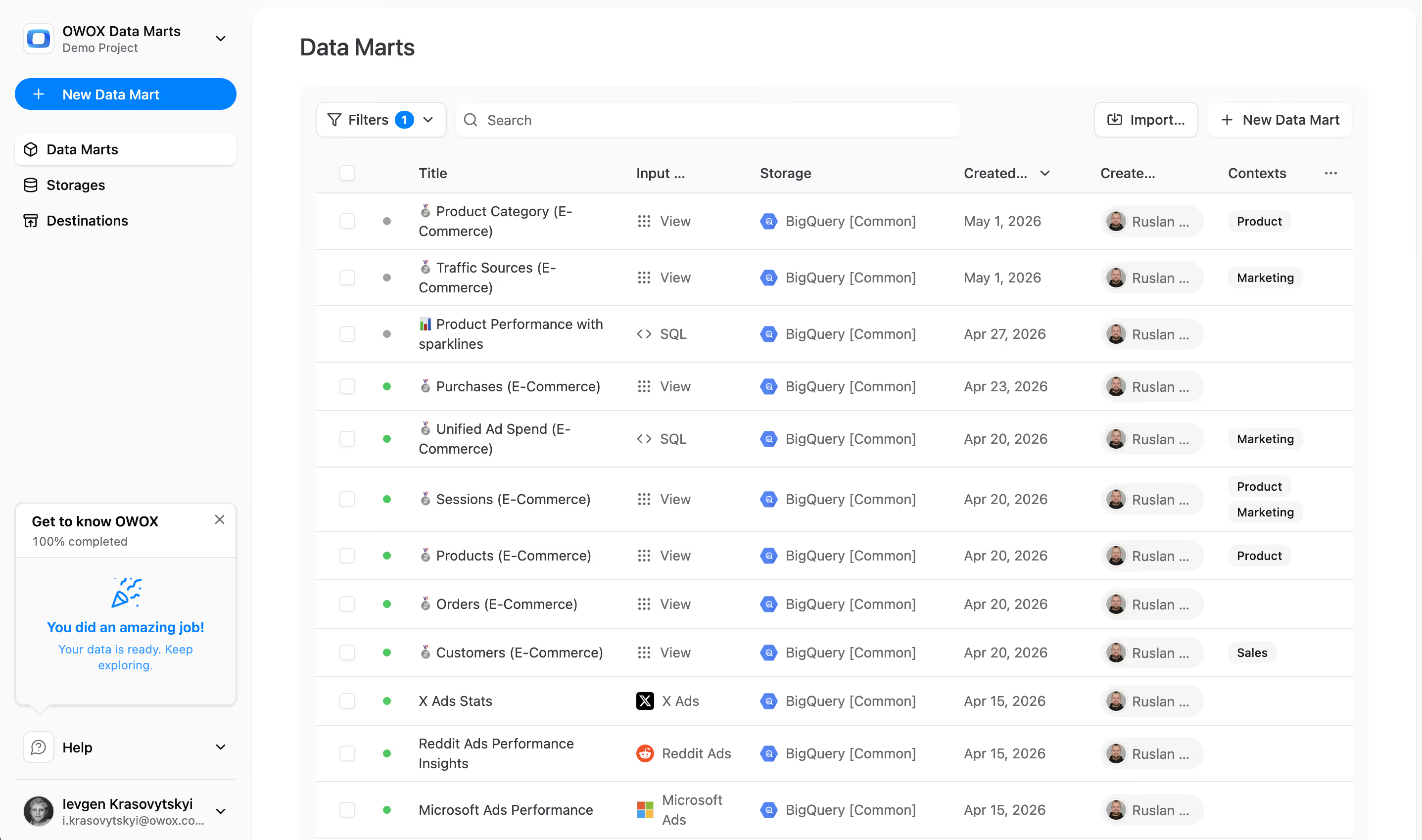

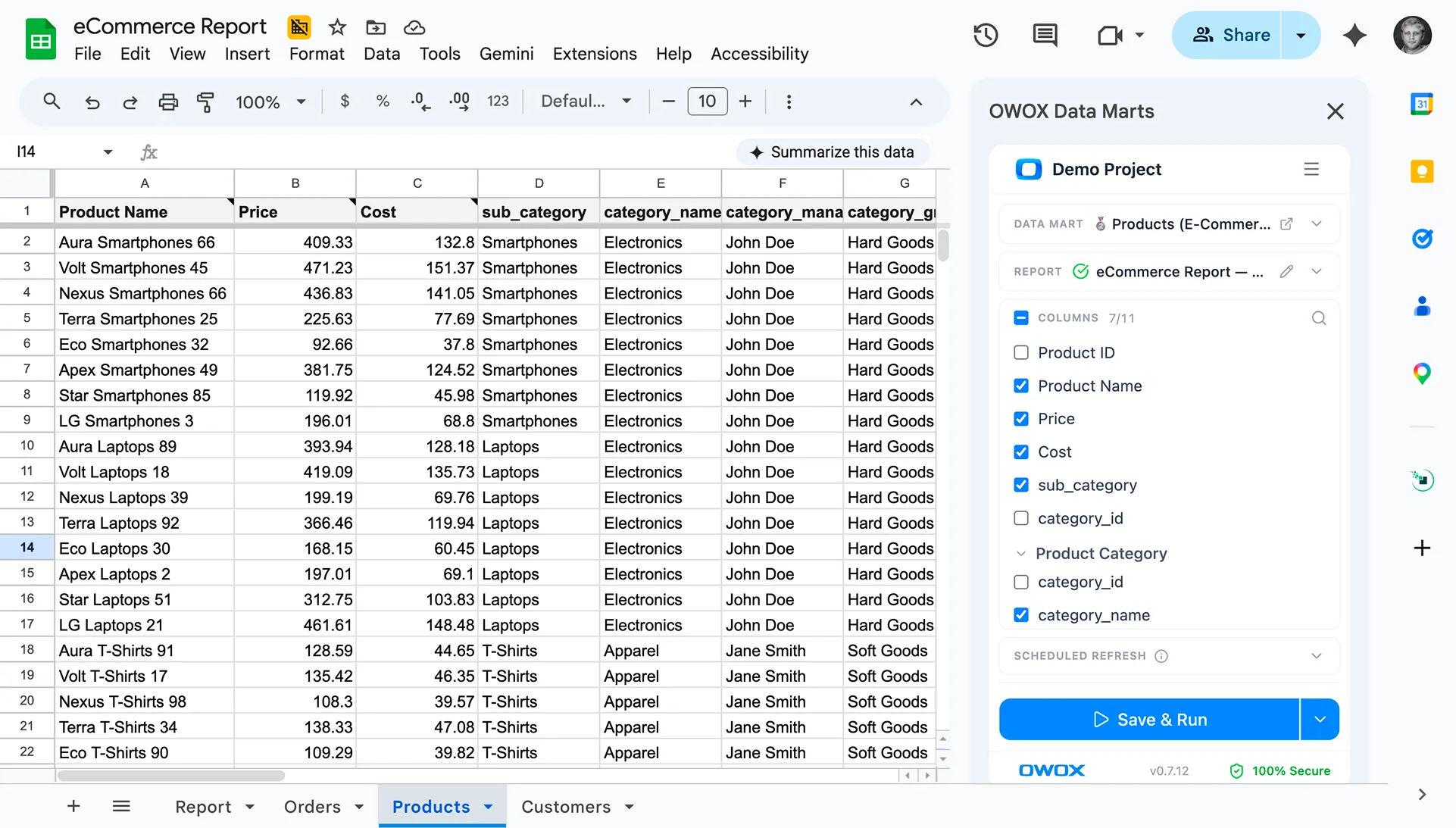

Introducing OWOX BI SQL Copilot: Simplify Your BigQuery Projects

OWOX BI SQL Copilot makes working with raw or semi-structured data in BigQuery easier. It helps you write, explain, and improve SQL queries using AI-powered guidance. Whether you're exploring schema-on-read datasets or building reports, the copilot speeds up analysis, reduces errors, and saves hours of manual work, perfect for analysts who want clarity, not complexity.

Finally, a tool that doesn't ask business users to learn a new dashboarding UI. Our marketing team already knows Sheets. OWOX just delivers the right data.

Joinable data marts concept was the thing that sold us. We can now use the semantic layer without building one.

Self-hosted the OSS version on Digital Ocean. Zero vendor lock-in. Contributed a Shopify connector back in week two.