How to Avoid Data Sampling and Get Complete Data for Advanced Analytics

Master the art of collecting complete data for advanced analytics. Avoid data sampling and cardinality limits and get comprehensive data analytics.

Have you ever wondered how to avoid data sampling and reporting limitations in Google Analytics 4? How can you get complete data for marketing reporting? There are several ways to do so, such as:

- Set up the [GA4] BigQuery Export (raw unsampled user behavior data).

- Use other tools to stream user behavior data from the website to BigQuery.

Each of these solutions has its specialties and limitations.


In this article, we explain in detail how to get rid of data sampling and how to build out advanced analytics.

Note: This post was originally published in May 2020 and was completely updated in April 2024 for accuracy and comprehensiveness on the state of marketing analytics, reporting, and privacy.

What is data sampling

Data sampling in Google Analytics 4 refers to the practice of analyzing a subset of data instead of the entire dataset. This approach is used when the amount of data is large, making it challenging to process everything quickly.

By examining a representative sample of the data, Google Analytics 4 can generate reports faster while maintaining a reasonable (at least they think it is…) level of accuracy. The results are then extrapolated to estimate the behavior of all website traffic.
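To make the extrapolation concrete, here is a minimal Python sketch under stated assumptions: the traffic is a hypothetical list of 100,000 event names, and "sampling" means keeping each event with a fixed probability. GA4's actual sampling algorithm isn't public, so this is only an illustration. The estimate for a frequent event lands close to the truth, while a rare event can only be estimated in steps of 1 / sample_rate, so 20 refunds may well come out as zero or as double the true count.

```python
import random

def estimate_count(events, sample_rate, predicate, seed=0):
    """Estimate how many events match `predicate` by inspecting a random
    sample and scaling the sampled count back up by 1 / sample_rate."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    sample = [e for e in events if rng.random() < sample_rate]
    matches_in_sample = sum(1 for e in sample if predicate(e))
    return matches_in_sample / sample_rate

# Hypothetical traffic: 100,000 events, of which only 20 are a rare "refund".
events = ["page_view"] * 99_980 + ["refund"] * 20

exact_refunds = events.count("refund")
estimated_refunds = estimate_count(events, 0.05, lambda e: e == "refund")
estimated_views = estimate_count(events, 0.05, lambda e: e == "page_view")

print(f"refunds: exact={exact_refunds}, estimated={estimated_refunds:.0f}")
print(f"page views: exact={events.count('page_view')}, estimated={estimated_views:.0f}")
```

Try a few different seeds: the page-view estimate barely moves, while the refund estimate jumps between multiples of 20.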

However, data sampling can lead to less precise data, especially in cases of complex custom reports where specific trends or anomalies might be missed.

Understanding Data Sampling in Google Analytics 4 (GA4)

Data sampling in Google Analytics 4 works differently than in the previous version, Universal Analytics (GAU). GA4 is designed to handle larger datasets more efficiently, reducing the need for sampling in standard reports.

However, GA4 may still apply sampling in certain situations, such as in ad-hoc reports (explorations) or when dealing with extremely high volumes of data. GA4's machine learning capabilities also help predict trends and user behavior, adding to the insights gained from sampled data. Overall, the shift towards more sophisticated data processing in GA4 aims to deliver accurate, comprehensive insights without processing the full volume of data for every report.

Why Sampled Data Makes It Harder to Make the Right Decisions

Data sampling can sometimes obscure crucial insights from your data for several reasons:

- Loss of Detail in Rare Events: Sampling involves analyzing only a subset of the entire dataset. If rare events or anomalies are present in the unsampled data, they might not appear in the sample. This is particularly problematic in scenarios like fraud detection, where rare but significant events can be completely missed in a sample.

- Biased Samples: If the sample is not representative of the entire dataset, it can lead to biased insights. For instance, if a sample overrepresents a particular demographic or user behavior, the conclusions drawn might not accurately reflect the broader population or user base.

- Reduced Precision in Segmentation and Granularity: When detailed segmentation (such as geographic, demographic, or behavioral segmentation) is crucial, sampling can dilute the specificity and accuracy of these segments. This reduction in granularity can lead to oversimplified interpretations of the data.

- Impact on Trend Analysis: In trend analysis, especially with time-series data, sampling can mask subtle but important trends or patterns. This is because the random nature of sampling might exclude data points that are critical for identifying these trends.

- Compromised Custom Reports: In cases where custom reports are needed to answer specific business questions, sampling can limit the ability to drill down into the data. Custom queries often require looking at specific subsets or combinations of data, which might be inadequately represented in a sample.

- Statistical Significance and Confidence Levels: Sampling can affect the statistical significance of the results. Smaller samples might not provide enough data to confidently assert findings, leading to a higher margin of error and less confidence in the results.
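The last point can be quantified. As a rough sketch, the normal-approximation 95% confidence interval for a proportion p measured on n sampled sessions has a half-width of 1.96 · sqrt(p(1 - p) / n). The 2% conversion rate below is a hypothetical figure chosen only for illustration.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Half-width of the normal-approximation confidence interval (95% for z=1.96)
    for a proportion p estimated from n sampled sessions."""
    return z * math.sqrt(p * (1 - p) / n)

# Hypothetical 2% conversion rate measured on samples of different sizes.
for n in (1_000, 10_000, 100_000):
    print(f"n={n:>7,}: 2.00% ± {margin_of_error(0.02, n):.2%}")
```

Because the margin shrinks with the square root of n, a sample ten times smaller widens it by about 3.2 times, which is why small sampled segments quickly become unreliable.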

In summary, while sampling is a useful tool for managing large datasets and improving processing times, it is essential to be aware of its limitations, especially when dealing with rare events, when high granularity is required, or when conclusions must be drawn with a high level of confidence and precision.

Problems and Limitations with Exporting Data to BigQuery

The main limitation of the [GA4] BigQuery Export is the complexity of BigQuery for marketers. It requires SQL knowledge to group events into sessions, merge online data with advertising costs, attribute costs to sessions, and build attribution models.
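For a sense of what that SQL has to do, here is the sessionization step sketched in Python rather than SQL. It assumes rows shaped like the GA4 export (user_pseudo_id, event_timestamp in microseconds, event_name), with event_params simplified to a plain dict; in the real export, ga_session_id sits inside the nested event_params array. A session is identified by the pair (user_pseudo_id, ga_session_id).

```python
from collections import defaultdict

def build_sessions(rows):
    """Group GA4-style event rows into sessions keyed by
    (user_pseudo_id, ga_session_id) and summarize each session."""
    sessions = defaultdict(list)
    for row in rows:
        session_id = row["event_params"].get("ga_session_id")
        if session_id is None:
            continue  # events without a session id can't be attributed to a session
        sessions[(row["user_pseudo_id"], session_id)].append(row)

    summary = {}
    for key, events in sessions.items():
        events.sort(key=lambda r: r["event_timestamp"])  # order events within the session
        summary[key] = {
            "start_us": events[0]["event_timestamp"],
            "end_us": events[-1]["event_timestamp"],
            "events": [e["event_name"] for e in events],
        }
    return summary

# Hypothetical rows; note that they arrive out of order.
rows = [
    {"user_pseudo_id": "u1", "event_timestamp": 2_000_000, "event_name": "page_view",
     "event_params": {"ga_session_id": 111}},
    {"user_pseudo_id": "u1", "event_timestamp": 1_000_000, "event_name": "session_start",
     "event_params": {"ga_session_id": 111}},
    {"user_pseudo_id": "u2", "event_timestamp": 1_500_000, "event_name": "page_view",
     "event_params": {"ga_session_id": 222}},
]
for key, session in build_sessions(rows).items():
    print(key, session["events"])
```

Merging costs and building attribution models then sits on top of this step, which is where most of the SQL effort goes.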

Moreover, the most recent user actions take more than 15 minutes to appear in the BigQuery tables, and even that refresh rate costs extra: by default, the events tables are updated three times a day.

You'll also have to pay extra for processing queries in BigQuery. The export table from GA4 contains many nesting levels and fields that can be blank in 80% of cases. The more nesting levels a table has, the more expensive the queries tend to be. If you have many reports to update hourly or daily, data processing will account for most of your GBQ costs.

Most other services that stream website interaction data to GBQ in their own table structure can have the same problem.

Because the export from GA4 to Google BigQuery reflects data as it was collected in Google Analytics, it doesn't update records retrospectively. For example, a purchase return is typically recorded as a separate session that isn't associated with the payment session, so the correct revenue for the order must be recalculated manually.

None of the mentioned solutions can transfer your ad campaign costs at the session level into Google BigQuery. This means you won't know how much you've spent attracting a specific segment of users, and you can't calculate ROI and DRR (advertising cost ratio) for different cohorts, product groups, and landing pages, unless you use other tools to load the advertising costs into BigQuery and blend them with SQL.

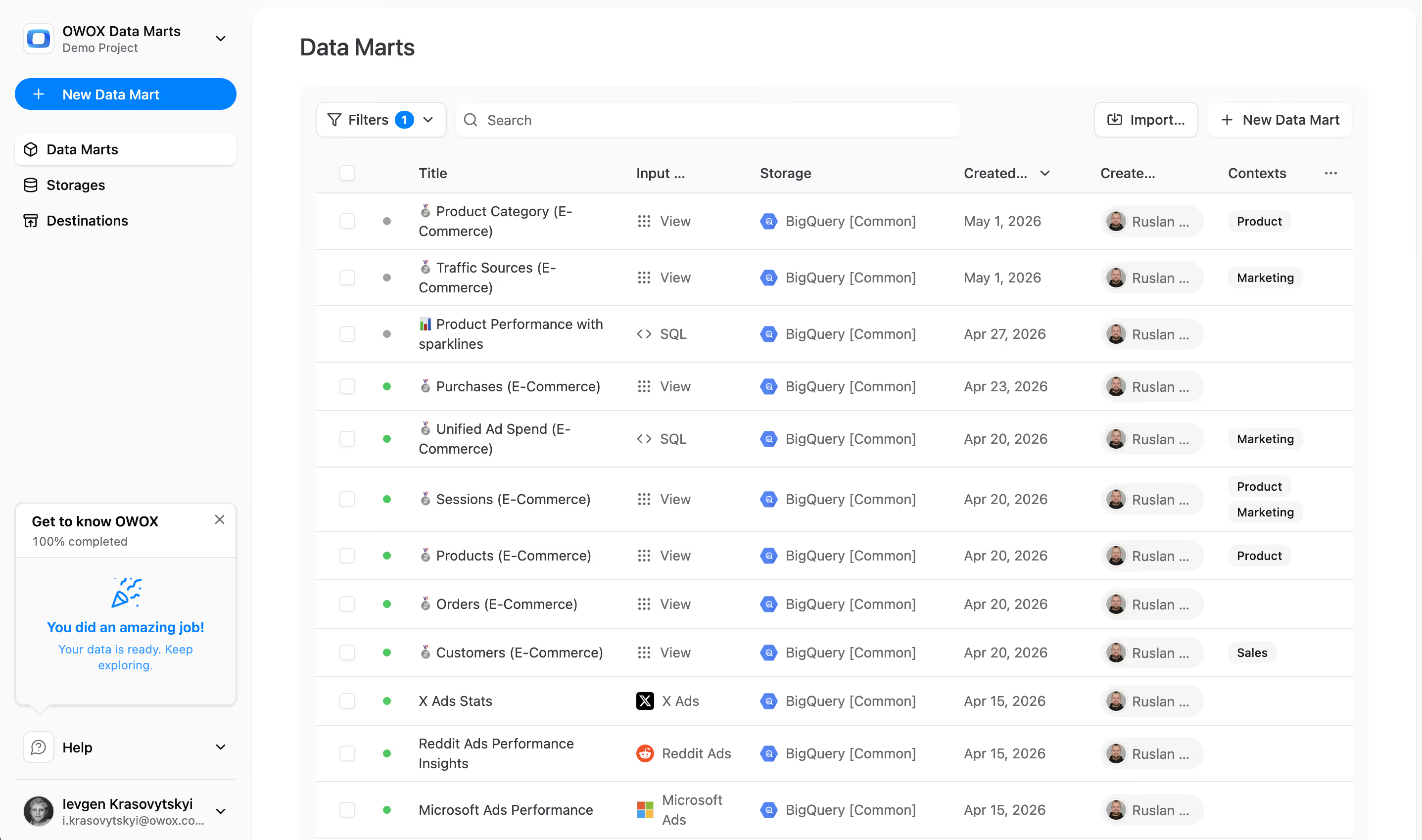

Knowing these limitations, we at OWOX decided to improve the raw data processing experience for any kind of business. We conducted hundreds of product interviews and received the following requests:

- Website data should be completely collected into Google BigQuery in a non-aggregated form.

- User behavior data must be delivered to Google BigQuery in real-time.

- Session tables in Google BigQuery should contain advertising cost data.

- The table structure must be compact and as complete as possible so that queries run efficiently.

- Data must be synchronized with historical periods by key parameters.

- It should be possible to compare data in BigQuery with GA4 reports when significant discrepancies appear.

We’ve considered all these requests and developed our own real-time cookieless website data streaming into Google BigQuery.

Key Strategies to Overcome Data Sampling with OWOX BI

Let's take a closer look at how OWOX BI bypasses the restrictions of Google Analytics 4 and the standard GA4 BigQuery export:

1. Unlimited amount of collected data

The idea of sampling is to build reports on samples of the data, not the whole volume of available data. If the sample is too small, you can’t trust a report built on such a sample. With OWOX BI, you can collect complete, unsampled data to create more accurate reports and make data-informed decisions.

How it worked in Google Analytics Universal

The Google Analytics Universal interface applies a hit limit to data processing and reporting. As a result, you get either sampled data or aggregated tables (the "(other)" row in reports). Besides, if you use a segment or create a custom report, it is usually displayed in sampled form.

How it works in Google Analytics 4

Unlike its predecessor, Google Analytics 4 does not limit the number of events (formerly hits, a.k.a. user interactions) at the time of data collection. However, Google Analytics 4 might still apply data sampling in certain scenarios, particularly in complex or large-scale custom reports.

How it works in OWOX BI

Each user action on the website (page view, button click, banner view, or order placement) is streamed to BigQuery as a separate message, an event. There are no restrictions on the number of events at the moment of data collection.

OWOX BI copies the event content as it leaves your website and then sends it to your project in Google BigQuery. Thus, you get raw, unsampled data with no limit on the number of events collected and processed.

Use case

Let's say that, to stay within GA4 limits, you are forced to collect promo page traffic, advertising partners' data, or landing page statistics for each brand/manufacturer in separate Google Analytics 4 properties. As a result, you have to build reports without being able to assess all the traffic that is valuable to you.

You can collect this data in a single table in Google BigQuery with OWOX BI Streaming. It provides you with a basis for strategic decisions and a comprehensive vision of the website performance and advertising campaign effectiveness.

Find out what other problems you may encounter while building reports in Google Analytics 4, and how to solve them by building reports on data in BigQuery.

2. Real-time data collection

With OWOX BI, you can quickly send a triggered email or detect an anomaly in the website data collected in Google BigQuery, because user behavior data appears in your project within just 1 minute.

How it worked in Google Analytics Universal

Data collected during a day appeared in GA only after 48 hours (after 4 hours in GA 360). Real-time reports displayed a limited number of parameters and metrics and didn't let you personalize communication based on user actions.

The standard export from GA 360 to GBQ was able to operate in two modes:

- Upload data roughly once every 8 hours at no extra cost (you pay for the GA 360 license and data storage in GBQ).

- Upload data every 15 minutes (you pay extra for every 1 TB processed).

How it works in Google Analytics 4

Google Analytics 4 typically makes daily data available within 24 hours and offers some real-time reporting. However, these real-time reports are limited in scope and detail.

How it works in OWOX BI

OWOX BI sends data immediately from the website to your GBQ project. You receive complete non-aggregated user behavioral data within 1 minute after the event occurs.

Use case

By monitoring user activity on the website in real time, you can stimulate sales with triggered email campaigns and personal offers. For example, when a user adds an item to the shopping cart but doesn't buy anything, or doesn't complete a deposit application on the website.

It can also help you detect and fix problems on the website promptly: you can get triggered notifications about website code errors and drops in traffic or conversions.

Learn how Pigu managed to increase the number of sessions on Black Friday by 15%.

3. Increased allowed hit / event size

With OWOX BI, you get a broad picture of user activity on your website, even if they make orders consisting of 15+ items or browse large lists of items.

How it worked in Google Analytics Universal

GA limited the size of a transferred hit to 8 KB. For example, if a transaction hit exceeded this limit, it wasn't tracked in GA.

How it works in Google Analytics 4

Google Analytics 4 still restricts the size of individual events, for example through limits on the number of parameters per event and on the length of parameter values. These limits keep data processing and storage efficient, so it's important to know them to make sure all relevant data is captured and reported.

How it works in OWOX BI

In OWOX BI, the maximum size of the event transferred to Google BigQuery is increased to 16 KB.

Use cases for E-commerce

A hit containing product list information can exceed 8 KB when you track users browsing catalog pages with Enhanced Ecommerce. This happens if:

- More than 10 items are sent in one event;

- Each item has an additional parameter.
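To check whether a product-list event is likely to run into the 8 KB or 16 KB limits described above, you can measure its serialized size. The sketch below is an illustration only: it serializes a hypothetical view_item_list event as compact JSON, whereas GA Universal sent hits as URL-encoded query strings, which are usually larger. Real thresholds therefore depend on your encoding and on how many parameters each item carries.

```python
import json

GA_UNIVERSAL_LIMIT = 8 * 1024  # 8 KB hit limit described above
OWOX_LIMIT = 16 * 1024         # 16 KB event limit described above

def payload_size(event):
    """Size in bytes of the event serialized as compact UTF-8 JSON."""
    return len(json.dumps(event, separators=(",", ":")).encode("utf-8"))

def make_list_view(n_items):
    """Hypothetical product-list event; the field names are illustrative only."""
    return {
        "event": "view_item_list",
        "items": [
            {
                "item_id": f"SKU-{i:06d}",
                "item_name": f"Product name number {i} with a long title",
                "item_category": "Home & Garden / Furniture / Chairs",
                "price": 129.99,
                "item_variant": "color: walnut, size: large",
                "custom_param": "promo-badge-spring-sale",  # the "additional parameter"
            }
            for i in range(n_items)
        ],
    }

for n in (10, 40, 100):
    size = payload_size(make_list_view(n))
    print(f"{n:>3} items: {size:>6} bytes, "
          f"fits 8 KB: {size <= GA_UNIVERSAL_LIMIT}, fits 16 KB: {size <= OWOX_LIMIT}")
```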

In this case, the oversized hit won't get into GAU/GA4. However, you can find it in the raw data collected in GBQ with OWOX BI and build a more accurate report on viewed product lists.

Use cases for finance

Let's say you want to collect more information about the customer and the product itself along with requests for banking products. Because larger events reach BigQuery instead of being dropped, OWOX BI gives you more accurate conversion statistics.

4. Linking ad costs to sessions

OWOX BI allows you to calculate the real value of each session. With this, you can accurately calculate ROAS for new and returning users. You can also:

- Compare the profitability of cohorts created by those customers who saw the banner and those who didn’t.

- Find out how much you spend and earn on each of the product groups.

- Evaluate the effectiveness of advertising for different regions, landing pages, mobile versions, and applications.

How it works in Google Analytics 4

Google Analytics 4 lets you import ad costs manually, but it may not offer the granularity or session-level cost attribution you expect.

How it works in OWOX BI

OWOX BI automatically uploads ad costs to Google Analytics 4 and Google BigQuery, distributing them across sessions according to UTM tags.

As a result, you know the cost of each session across five main UTM tags (source, medium, campaign, keyword, content) and can build reports on CPC, CPA, or ROAS using raw data. This improves your report accuracy and helps you allocate your marketing budget more efficiently.

Use case

With OWOX BI, you can find out how much you spent on each session and group costs and revenue by certain users, cohorts, or landing pages.

For example, you want to understand which product groups you should promote to bring in more profit, because the product that attracts a user isn't necessarily the product that the user buys. To find the most effective product categories to promote, you need to group your digital ad costs by category.

This can be done with OWOX BI by transferring cost data from advertising services to GBQ and distributing costs across all sessions with UTM tags. In this case, both costs and income will be properties of the session.
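Here is a minimal Python sketch of that idea. It assumes each cost row carries the same five UTM values as the sessions (with keyword standing in for utm_term) and that a cost row is split evenly across its matching sessions. The even split is a simplifying assumption for illustration, not a description of how OWOX BI allocates costs. Once cost and revenue are both session properties, ROAS by landing page, product group, or cohort is a simple group-by.

```python
from collections import defaultdict

# The five UTM dimensions named above; "keyword" corresponds to utm_term.
UTM_KEYS = ("source", "medium", "campaign", "keyword", "content")

def utm_key(row):
    return tuple(row[k] for k in UTM_KEYS)

def distribute_costs(sessions, cost_rows):
    """Split each cost row evenly across the sessions sharing its five UTM values.
    Cost rows with no matching session are left unallocated in this sketch."""
    sessions_by_utm = defaultdict(list)
    for s in sessions:
        s["cost"] = 0.0
        sessions_by_utm[utm_key(s)].append(s)
    for row in cost_rows:
        matched = sessions_by_utm.get(utm_key(row), [])
        for s in matched:
            s["cost"] += row["cost"] / len(matched)
    return sessions

def roas_by(sessions, dimension):
    """Revenue / cost per value of a session property (e.g. landing page)."""
    totals = defaultdict(lambda: [0.0, 0.0])  # value -> [revenue, cost]
    for s in sessions:
        totals[s[dimension]][0] += s["revenue"]
        totals[s[dimension]][1] += s["cost"]
    return {value: rev / cost for value, (rev, cost) in totals.items() if cost > 0}

# Hypothetical campaigns, sessions, and cost rows.
google = {"source": "google", "medium": "cpc", "campaign": "spring",
          "keyword": "chairs", "content": "ad1"}
facebook = {"source": "facebook", "medium": "cpc", "campaign": "spring",
            "keyword": "(none)", "content": "video"}
sessions = [
    {**google, "landing_page": "/chairs", "revenue": 300.0},
    {**google, "landing_page": "/tables", "revenue": 0.0},
    {**facebook, "landing_page": "/chairs", "revenue": 50.0},
]
cost_rows = [{**google, "cost": 100.0}, {**facebook, "cost": 50.0}]

distribute_costs(sessions, cost_rows)
print(roas_by(sessions, "landing_page"))
```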

This way, you can group costs and revenue by session properties and manage marketing performance based on customer segments, product groups, and landing pages.

5. Retrospective data updating

With OWOX BI, there’s no need for any additional actions to evaluate order redemption, purchase returns, or to find out what a new subscriber has been doing on your site for the last three months.

Our service allows you to retrospectively update the data uploaded into Google BigQuery.

How it worked in Google Analytics Universal

In the standard GA Universal, you can only retrospectively update the order status if less than 4 hours have passed from making the order to the payment. Otherwise, the payment is recorded in GA as a new session.

For example, if you record a return on April 3 for a transaction made on March 18, the report for March 18 still shows the full transaction amount. As a result, the value is distributed incorrectly across channels.

How it works in Google Analytics 4

In Google Analytics 4 (GA4), retrospective data updating is limited, as most changes do not apply retroactively.

However, with the GA4 event export to Google BigQuery, you can update the data yourself directly in BigQuery. If your GAU data is also collected in BigQuery, you can combine GAU and GA4 data for historical analysis, although this process can be challenging.

How it works in OWOX BI

In the tables that OWOX BI fills up with data in Google BigQuery, you can retrospectively update the following information:

- Transaction details: you can enter a return or partial return, or change the amount, for any period you want (e.g., 30 or 365 days).

- User information: if a user has signed up or logged into the website, their User ID is retroactively assigned to the sessions that occurred during the last X days before the date of authentication.

- Cost data can be updated for any number of days of your choice.
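The User ID backfill described in the list above can be sketched as follows. This is a minimal Python illustration, not OWOX BI's implementation: it assumes each session record has a device identifier (client_id), a start timestamp, and an optional user_id, and the 30-day window stands in for the "last X days" setting.

```python
from datetime import datetime, timedelta

def backfill_user_id(sessions, client_id, user_id, login_time, lookback_days):
    """Assign user_id to sessions from the same device (client_id) that started
    within `lookback_days` before the login and have no user_id yet."""
    window_start = login_time - timedelta(days=lookback_days)
    for s in sessions:
        if (s["client_id"] == client_id
                and s.get("user_id") is None
                and window_start <= s["start"] <= login_time):
            s["user_id"] = user_id
    return sessions

# Hypothetical sessions around a login on April 10.
login = datetime(2024, 4, 10, 12, 0)
sessions = [
    {"client_id": "c1", "start": login - timedelta(days=45)},  # outside a 30-day window
    {"client_id": "c1", "start": login - timedelta(days=10)},  # inside the window
    {"client_id": "c2", "start": login - timedelta(days=5)},   # another device
    {"client_id": "c1", "start": login},                       # the login session itself
]
backfill_user_id(sessions, "c1", "U-42", login, lookback_days=30)
for s in sessions:
    print(s["client_id"], s["start"].date(), s.get("user_id"))
```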

Use cases for finance

If a user with a User ID applies for credit today, this User ID appears in all of their previous sessions from the same device. You can use these sessions to estimate the user's path to conversion, attribute revenue to advertising campaigns more accurately, and predict the conversion of other users.

Use cases for E-commerce

With OWOX BI, it's easier for you to account for returns and measure the effectiveness of advertising campaigns: streaming tables always show you the current order total. If the return happened on another day, you don't need to search for it in that day's tables.
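A minimal Python sketch of why updating the original order row helps, reusing the March 18 / April 3 example from the GA Universal section: the return reduces revenue on the original order, so totals by date and by channel stay correct without searching other days' tables. The order fields and values are hypothetical.

```python
from collections import defaultdict

def apply_return(orders, transaction_id, refund_amount):
    """Reduce the original order's revenue in place, so the correction lands on the
    date and channel of the original purchase rather than on the return date."""
    order = orders[transaction_id]
    order["revenue"] = max(0.0, order["revenue"] - refund_amount)
    order["status"] = "returned" if order["revenue"] == 0 else "partially_returned"
    return order

def revenue_by(orders, dimension):
    """Sum current order revenue by an order property such as date or channel."""
    totals = defaultdict(float)
    for order in orders.values():
        totals[order[dimension]] += order["revenue"]
    return dict(totals)

orders = {
    "T1": {"date": "2024-03-18", "channel": "google / cpc", "revenue": 200.0, "status": "paid"},
    "T2": {"date": "2024-03-18", "channel": "email", "revenue": 80.0, "status": "paid"},
}
# A partial return registered on April 3 for the March 18 order T1.
apply_return(orders, "T1", 50.0)
print(revenue_by(orders, "date"))
print(revenue_by(orders, "channel"))
```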

If you plan to build a ROPO (Research Online, Purchase Offline) report, the calculations are easier when the User ID is assigned to as many of the client's historical sessions as possible.

ROPO analysis: how the company determined the effectiveness of online advertising, taking into account both online and offline user actions.

6. The structure of data tables

As mentioned above, the tables exported to Google BigQuery from Google Analytics 360 (for GAU) and from GA4 have too many nested fields, which increases the cost of processing this data.

How it worked in Google Analytics Universal

In Google Analytics Universal 360, when exporting data to Google BigQuery, the tables often include nested and repeated fields. These complex structures can increase the processing time and cost.

How it works in Google Analytics 4

The data structure in Google Analytics 4 is different, but it still includes nested and empty fields, which can increase data processing costs.

How it works in OWOX BI

OWOX BI helps you optimize the costs of data storage and processing in GBQ. There are fewer blank fields in the streaming tables and fewer nesting levels, so the tables themselves and their queries “weigh” less.
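To illustrate what a flatter structure saves you, the sketch below flattens one row shaped like the GA4 export, where each entry in event_params is a key plus a value struct with string_value, int_value, float_value, and double_value fields, of which usually only one is populated. In BigQuery you would typically do this with UNNEST in SQL; this Python version only shows the shape of the transformation and is not OWOX BI's table schema.

```python
def flatten_event(row):
    """Turn a row shaped like the GA4 export (nested event_params) into one flat dict."""
    flat = {k: v for k, v in row.items() if k != "event_params"}
    for param in row.get("event_params", []):
        value = param["value"]
        # Normally only one typed field is populated; the others are null (None here).
        for field in ("string_value", "int_value", "float_value", "double_value"):
            if value.get(field) is not None:
                flat[param["key"]] = value[field]
                break
    return flat

# Hypothetical export row with two event parameters.
row = {
    "event_name": "page_view",
    "user_pseudo_id": "123.456",
    "event_params": [
        {"key": "ga_session_id",
         "value": {"string_value": None, "int_value": 1712740000,
                   "float_value": None, "double_value": None}},
        {"key": "page_location",
         "value": {"string_value": "https://example.com/chairs", "int_value": None,
                   "float_value": None, "double_value": None}},
    ],
}
print(flatten_event(row))
```

Every report on the nested export has to repeat this unpacking, while a flat table stores the result once.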

Use case

As an e-commerce company using Google Analytics 4 (GA4), you might struggle with complex, nested data when exporting to Google BigQuery, leading to higher processing times and costs.

By switching to OWOX BI, you can simplify your data structure, reducing nesting and empty fields. This change results in more straightforward and efficient SQL queries, lower BigQuery costs, and faster access to clear insights about customer behavior and campaign effectiveness. OWOX BI's optimization of your data analytics can streamline your processes, making them more cost-effective and enhancing your ability to make quick, informed decisions.

7. Ability to collect data directly from the website into Google BigQuery

OWOX BI helps you avoid limitations on data collection. If you plan to track all user activity — from viewing the banner to selecting additional services in the order — all additional hits can be sent directly to Google BigQuery.

How it worked in Google Analytics Universal

All information about the user interaction with your website is transmitted to Google Analytics through separate hits — messages to the GA server.

How it works in Google Analytics 4

GA4 collects data by sending events to its servers. While Google Analytics 4 offers more flexibility than previous versions, it might not match the direct data collection capabilities of OWOX BI.

How it works in OWOX BI

At the moment of event creation, OWOX BI replaces the address for sending data from the GA4 resource with the address of your project in Google BigQuery. We can duplicate all GA4-addressed hits into GBQ tables or block (fully or partially) all data in GA4 and send hits only to GBQ tables.

This solution has the following advantages:

- You get unsampled website data in the familiar Google Analytics structure, for which thousands of SQL queries have already been written. This saves time and reduces the need for regular consultations with analysts.

- You can set up data collection without lowering your website’s load speed. It’s possible because OWOX BI duplicates GA4 events rather than overloading your GTM with a separate JS counter.

- We guarantee an SLA in the contract and provide the functionality needed to monitor data quality and to automatically preserve data if Google Analytics or your Google Cloud project fails. You can be sure of the data quality in your reports without additional effort.

With OWOX BI, it’s also possible to collect user behavior data without the Google Analytics JS counter. We can collect events data from your website into Google BigQuery with our tracking code.

Use cases for banks

If you plan to collect sensitive data from the website (customer type, details, order parameters), you should send it directly to Google BigQuery, where the Tier 4 security standard regulates information protection. Any sensitive information may be transmitted in hashed or encrypted form.
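As a sketch of the "hashed form" mentioned above, here is one common approach using Python's standard library: normalize the value, then apply a keyed SHA-256 (HMAC) so that someone without the key can't match the hash against precomputed hashes of known emails or phone numbers. The normalization rules and the key placeholder are assumptions; choose them to match your own security policy, and apply the same rules everywhere you need to join on the hash.

```python
import hashlib
import hmac

def normalize_email(email):
    return email.strip().lower()

def normalize_phone(phone):
    # Keep digits only (plus a leading "+"), e.g. "+1 (555) 010-9999" -> "+15550109999".
    digits = "".join(ch for ch in phone if ch.isdigit())
    return ("+" + digits) if phone.strip().startswith("+") else digits

def pseudonymize(value, secret_key):
    """Keyed SHA-256 (HMAC) of a normalized value, returned as 64 hex characters."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()

# Hypothetical placeholder; in practice, load the key from a secret store.
KEY = b"replace-with-a-secret-from-your-key-store"

print(pseudonymize(normalize_email("  Jane.Doe@Example.com "), KEY))
print(pseudonymize(normalize_phone("+1 (555) 010-9999"), KEY))
```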

Use cases for E-commerce

Let's say there are more than 1,000 banners on your site. You plan to track views and clicks for each of them and measure how they affected purchases in the current or following sessions. If you track the actual views of each banner, the amount of data sent to GA4 will exceed the limit by 20-25%.

With OWOX BI, you can send all banner statistics directly to Google BigQuery (bypassing GA) and get insights into the effectiveness of internal promo campaigns. This way, you don't lose any information to data sampling and aggregation in standard GA4 or to exceeding the limits in GA 360.

In fact, this solution works for other industries too, such as entertainment. Discover how a movie streaming service used advanced analytics with OWOX BI to boost content strategy, optimize marketing, and increase user engagement. This success story shows the real impact of aligning business goals with powerful analytics tools.

8. Unlimited number of user parameters and dimensions

With OWOX BI, you can transfer an unlimited number of user parameters and dimensions from the website to Google BigQuery. It allows you to segment users by any feature and build more detailed reports for in-depth analysis.

How it worked in Google Analytics Universal

Google Analytics Universal also restricted the number of custom dimensions and metrics collected from the website: 20 each in the standard version and 200 each in GA 360.

How it works in Google Analytics 4

GA4 provides flexibility in defining custom metrics and dimensions, allowing businesses to tailor their analytics to specific needs. While more flexible than previous versions, there are still limits on the number of custom metrics and dimensions that can be created.

How it works in OWOX BI

OWOX BI empowers you to effortlessly move an unlimited volume of user parameters and dimensions from your website to Google BigQuery. This capability enables you to segment users based on any feature, facilitating the creation of more detailed reports for comprehensive and insightful analysis.

Use case

If you're managing a large online retail business and seeking to deeply understand and segment your vast customer base, OWOX BI offers a robust solution. Unlike the constraints you might face with Google Analytics 4's limits on custom metrics and dimensions, OWOX BI allows you to transfer an unlimited number of user parameters and dimensions from your website to Google BigQuery. This feature empowers you to create highly detailed and customized user segments, tailoring your marketing strategies to specific customer behaviors and preferences. By leveraging this capability, you can produce more nuanced and insightful reports, leading to more effective marketing approaches and a better understanding of what your customers truly want.

9. OWOX User ID

OWOX User ID helps you to optimize your promotion and remarketing costs if you have a network of websites with intersecting audiences. You also can evaluate the Post-View effect of banner and media ads.

How it works

With the help of third-party cookies, OWOX BI puts the same identifier on different websites (it can be your other website on a different domain or media advertising on an independent advertising platform). Then, this ID brings together sessions of several websites, and you’ll be able to analyze your intersecting audiences.

Use case

If your group of companies consists of multiple brands and their websites, OWOX BI can measure the intersection of your audiences and show you how to optimize the budget for remarketing and outreach campaigns.

1+1 Digital case: how a major retailer linked video ad views to online and offline sales, which helped calculate the ROAS and CPA of media ads more accurately.

10. Accurate user location

In our experience, the accuracy of determining a user’s geographic (Geo) position in OWOX BI is, on average, 20% higher than in similar GA4 reports.

How it worked in Google Analytics Universal

GA Universal determines geolocation using the Google API. This method isn't always reliable because it may be unsupported or blocked in some browsers or by user actions (especially due to GDPR).

How it works in Google Analytics 4

GA4 uses Google's API for determining user geolocation, which is generally reliable but may vary in accuracy depending on the user's browser settings and other factors.

How it works in OWOX BI

OWOX BI determines geolocation using another Google service, Cloud Load Balancing. This service specializes in balancing network load and links geo data to a specific IP address. Accordingly, streaming doesn't require any user permission; we avoid blocking and thus obtain more accurate geodata.

Use case

As an online marketer, imagine needing precise geolocation data for your target audience. With Google Analytics (GA) and GA4, you might face challenges due to their reliance on Google's API, which can be less accurate due to browser settings or GDPR blocks. Switching to OWOX BI, you benefit from its use of Google Cloud Load Balancing for geolocation, which links geo data more accurately to IP addresses without user permission issues. This enhanced accuracy, being about 20% higher than GA, allows you to better target your campaigns and understand your audience's geographic distribution, leading to more effective marketing strategies and improved ROI.

11. Accurate source determination with direct website traffic

isTrueDirect is a parameter that specifies whether the session refers to direct website access (direct traffic) or must be attributed to another source.

How it worked in Google Analytics Universal

The isTrueDirect variable in GA is true when:

- The user accesses the website directly (types the website address or uses a bookmark).

- The source, channel, and campaign parameters fully match for two consecutive visits. In this case, GA classifies the second session as direct traffic, even though it was initiated by advertising.
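The difference between the GA Universal rule above and the stricter rule OWOX BI applies (described below) can be expressed as two small predicates. This is a Python sketch that mirrors the rules as this article describes them, not any official implementation. It assumes sessions carry source, medium, and campaign fields (reading the "channel" above as medium) and that direct visits use the source value "(direct)", as GA Universal did.

```python
CAMPAIGN_KEYS = ("source", "medium", "campaign")

def is_true_direct_ga_universal(session, previous):
    """GA Universal rule as described above: direct access, OR the same
    source/medium/campaign as the user's previous session."""
    if session["source"] == "(direct)":
        return True
    return previous is not None and all(session[k] == previous[k] for k in CAMPAIGN_KEYS)

def is_true_direct_strict(session):
    """Stricter rule: true only when the user actually accessed the site directly."""
    return session["source"] == "(direct)"

# Hypothetical sessions: two consecutive visits from the same ad, and one direct visit.
previous = {"source": "google", "medium": "cpc", "campaign": "spring"}
repeat_visit = dict(previous)
direct_visit = {"source": "(direct)", "medium": "(none)", "campaign": "(not set)"}

for name, s in (("repeat ad visit", repeat_visit), ("direct visit", direct_visit)):
    print(name, is_true_direct_ga_universal(s, previous), is_true_direct_strict(s))
```

Under the GA Universal rule, the repeat ad visit counts as direct traffic; under the strict rule it does not, so its cost stays with the advertising campaign.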

How it works in Google Analytics 4

Google Analytics 4 (GA4) identifies direct website traffic, often referred to by parameters like isTrueDirect, no more accurately than previous versions of Google Analytics.

How it works in OWOX BI

In the OWOX BI tables, we define isTrueDirect = true only in the case of direct website access. It allows you to evaluate brand awareness accurately and to estimate the cost of a particular session correctly.

Use case

Reports with the isTrueDirect parameter help you measure the effectiveness of brand awareness ads. The growth of direct entries at the beginning of the user journey makes it possible to evaluate the contribution of offline and outreach campaigns for your marketing.

12. Personal data collection (PII)

Unlike in Google Analytics, you can collect and use PII customer data, including email and phone numbers, in Google BigQuery. Don’t lose users who didn’t reach the end of the funnel but tried to leave a request on the website.

You can use the phone number or email in GBQ to segment users and follow up with them.

How it worked in Google Analytics Universal

GA doesn’t allow the transfer of personal data in unencrypted form. Otherwise, your account will be blocked.

How it works in Google Analytics 4

Google Analytics 4 adheres to strict guidelines regarding the collection of personally identifiable information (PII). Collecting PII is not allowed in GA4, and violations can lead to account suspension.

How it works in OWOX BI

In the OWOX BI tables, we use the &tel and &email parameters (they aren’t supported in GA) to transmit the personal data to BigQuery in an easy-to-use way.

Use cases for finance

You can collect data about users who tried to apply for a loan or other service on the website but, for some reason, they were unable to submit a form. Then, the call center can contact these clients and help them to finish the procedure by phone.

Use cases for E-commerce

If you don’t collect User IDs within your online and offline systems, then the phone number and email can be used to link the online and offline data of your users.
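A minimal Python sketch of such a link, assuming both systems store a raw email address: normalize it, hash it with SHA-256, and join online sessions to offline purchases on that hash. The record fields are hypothetical. If you use a keyed hash instead, both sides must use the same key.

```python
import hashlib

def email_key(email):
    """SHA-256 of the normalized email, used as the join key."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()

def link_online_offline(online_sessions, offline_purchases):
    """Attach offline purchases to online sessions of the same person,
    matched on the hashed email (an inner join on that key)."""
    purchases_by_key = {}
    for p in offline_purchases:
        purchases_by_key.setdefault(email_key(p["email"]), []).append(p)
    linked = []
    for s in online_sessions:
        for p in purchases_by_key.get(email_key(s["email"]), []):
            linked.append({"session_id": s["session_id"],
                           "store_id": p["store_id"], "amount": p["amount"]})
    return linked

# Hypothetical data: the same customer typed her email differently online and in store.
online = [{"session_id": "s1", "email": "Ann@Shop.com"},
          {"session_id": "s2", "email": "bob@example.com"}]
offline = [{"store_id": "store-01", "email": " ann@shop.com", "amount": 120.0}]

print(link_online_offline(online, offline))
```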

Learn more about omnichannel retailing: why and how to integrate online and offline customer touchpoints.

If you need special reports tailored to your business needs and accounting system, a team of OWOX BI analysts can help you out. Book a quick demo and find out the details.

Frequently asked questions

In Google Analytics 4, data sampling refers to the process of analyzing a subset of traffic data instead of examining all user interactions on a website. This happens especially when dealing with large data sets or complex reports, to speed up the process of generating analytics insights.

The limitations of data sampling in Google Analytics 4:

- Sampling is triggered for high-traffic sites or complex queries.

- May result in less accurate data representation.

- Limits in-depth analysis of all user interactions.

- Challenges in comparing data over long periods.

- Potential discrepancies between sampled and unsampled reports.

- Difficulties in granular audience segmentation.

- Restricted utility for precise A/B testing.

- Some detailed reports might be unavailable.

Data sampling can lead to less precise insights, reducing the effectiveness of data-driven decisions. It may not fully represent user interactions, skewing analysis, especially in detailed or custom reports.

Alternatives include using smaller data segments, simplifying report parameters, or integrating with platforms like Google BigQuery to process and analyze complete, unsampled datasets.

Collecting complete data provides more accurate and detailed insights, enhancing the understanding of user behavior and improving the reliability of trend analysis and decision-making processes.

![How to Set up [GA4] to BigQuery Export](https://cdn.prod.website-files.com/676a9690ef4ec151a69571ff/67acc8f2b918886086b338f5_Group%202313670-min.avif)

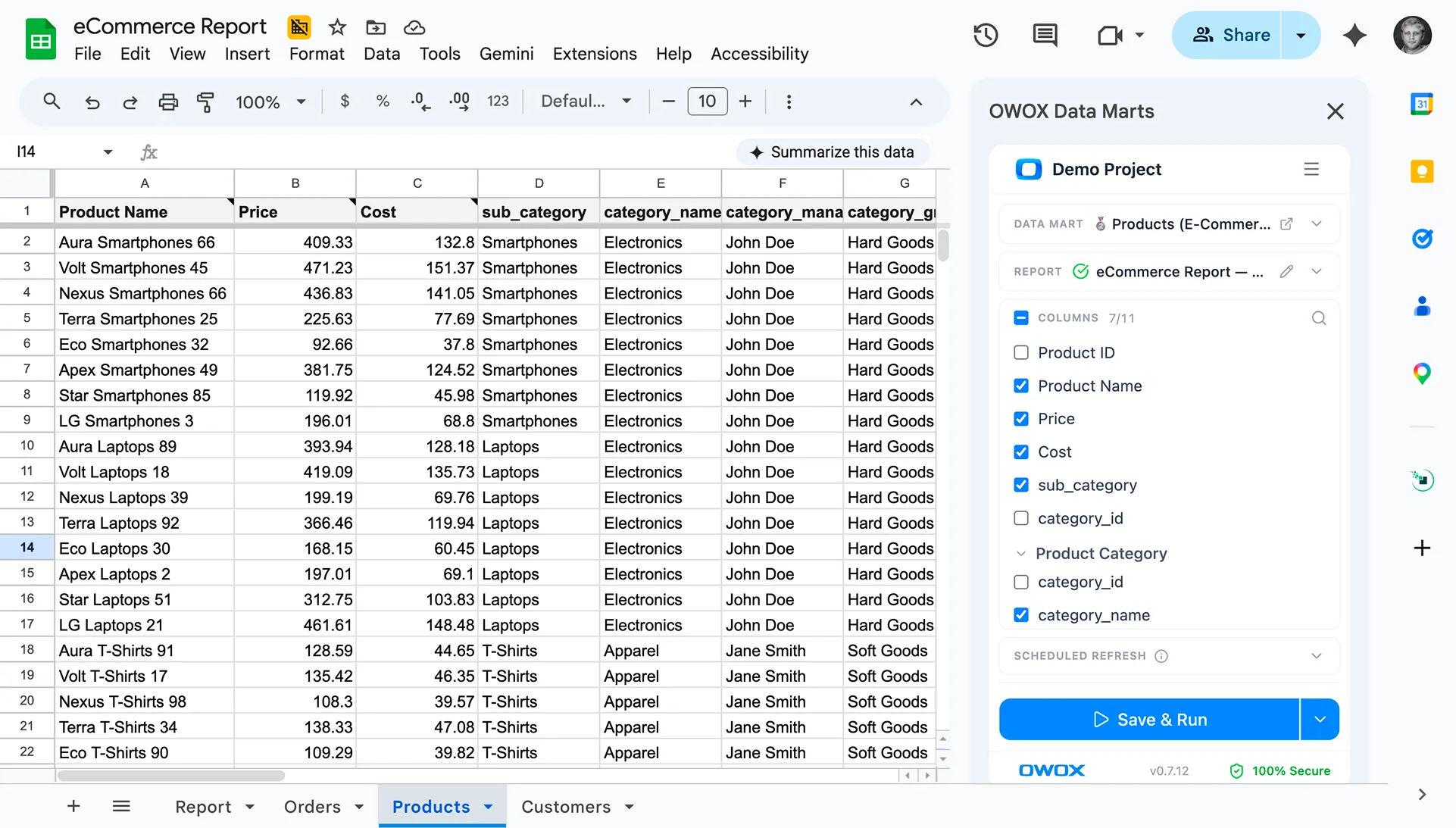

Finally, a tool that doesn't ask business users to learn a new dashboarding UI. Our marketing team already knows Sheets. OWOX just delivers the right data.

Joinable data marts concept was the thing that sold us. We can now use the semantic layer without building one.

Self-hosted the OSS version on Digital Ocean. Zero vendor lock-in. Contributed a Shopify connector back in week two.