A Comprehensive Guide On Measuring Your Online Advertising Effectiveness

Want to know how to measure online advertising effectiveness in 2025? Learn how to measure it with this step-by-step guide.

In this article, we’ll describe how to measure online advertising, talk about the tools and services for doing so, and explain why accurate measurements are so crucial. We’ve prepared step-by-step instructions for the most complicated parts so you can adapt them to your work. Uncover all the truth about the value of your online advertising!

From the day people started selling online, measuring internet advertising campaigns has been a headache for most marketers. How can businesses know if their marketing efforts bring profit or if they’re just splashing their budgets away? We’ll help you prove that your online ads are worth investing in and developing.

Note: This post was originally published in May 2019 and was completely updated in February 2024 for accuracy and comprehensiveness.

Understanding the Basics of Ad Campaign Effectiveness Measurement

Advertising campaign effectiveness measurement is a critical evaluation process that assesses whether a marketing campaign successfully reaches its target audience and achieves its intended outcomes. This evaluation is vital in the realm of marketing, a key component for any brand's success, which involves substantial effort, resources, and financial investment. The objective is to create campaigns that resonate deeply with the target demographic.

However, the process doesn't stop at launching a campaign. It's crucial to continuously monitor and measure ad performance. This is where the concept of advertising campaign effectiveness comes into play. It involves a rigorous analysis of various aspects of the campaign, such as its reach, engagement, conversion rates, and overall impact on the brand's image and sales.

The effectiveness of an advertising campaign is often gauged through both quantitative and qualitative measures. Quantitative data might include metrics like click-through rates, impressions, audience reach, and conversion rates. These numbers provide tangible evidence of how well the campaign is performing in terms of engaging and influencing the target audience.

Qualitative analysis, on the other hand, involves assessing the campaign's impact on brand perception, customer sentiment, and overall market position. This might involve gathering customer feedback, conducting surveys, or analyzing social media sentiment.

Understanding and measuring online advertising effectiveness is therefore not just about assessing past and current performance, but also about laying the groundwork for future marketing success.

Why You Need to Measure the Effectiveness of Online Advertising

The main reason why you have to measure the effectiveness of your online advertising campaigns is that any promotion is an investment. Any investor wants to know how their invested money is used and — what’s most important — when they can expect a return.

Learning how to measure digital advertising effectiveness offers numerous benefits, which are crucial for businesses and marketers in optimizing their digital marketing strategies. Here are some key benefits:

- Improved Return on Investment (ROI): By assessing how well online ads perform, companies can identify which campaigns are yielding a high return. This allows them to allocate their budgets more effectively, focusing on strategies that offer the best ROI.

- Optimization of Ad Content and Design: By analyzing which elements of an ad (like images, copy, and call-to-action) are most effective, companies can optimize their ad content and design for better engagement and conversion rates.

- Understanding Customer Journey: Effective measurement helps in tracking the customer journey across different touchpoints. This understanding can lead to more strategic ad placements and timings, enhancing the overall customer experience.

- Increased Customer Insights: Online ad effectiveness metrics can reveal insights about customer preferences and behaviors, providing valuable information for broader marketing and product development strategies.

- Budget Efficiency: Understanding which ads are effective helps in cutting costs on underperforming campaigns, ensuring that the advertising budget is spent efficiently.

- Long-term Strategic Planning: Long-term trends in ad performance data can inform broader marketing strategies and business decisions, aiding in sustainable growth and market positioning.

Measuring online advertising effectiveness is not just about understanding how ads perform; it's about leveraging that understanding to make smarter business decisions, connect more meaningfully with customers, and drive sustainable growth.

Metrics to Look for When Measuring Online Advertising

Metrics in online advertising provide tangible targets to strive for, allowing you to establish benchmarks for desired outcomes. By tracking your current performance metrics, you set the stage for future improvements. These metrics serve as a foundational tool for planning, enabling you to effectively monitor and refine your campaigns over time. They are essential for guiding your advertising strategy, helping you to anticipate changes, and adjust your approach for better results in subsequent campaigns. Let's look at the key metrics for measuring online advertising.

Conversion Rate (CR)

Conversion Rate is a key metric in online advertising, indicating how effectively your marketing drives desired actions such as purchases or sign-ups. A higher Conversion Rate often means lower customer acquisition costs and more efficient marketing. Pages that convert well also tend to have lower bounce rates, which may help organic traffic from search engines like Google, although that effect is indirect. Even small improvements in Conversion Rate can noticeably increase revenue without raising costs.
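As a rough illustration, Conversion Rate is usually computed as conversions divided by visits (or clicks). The sketch below assumes that definition; the function name and the numbers are hypothetical.

```python
def conversion_rate(conversions: int, visits: int) -> float:
    """Return conversions as a percentage of visits (0.0 when there are no visits)."""
    if visits == 0:
        return 0.0
    return 100 * conversions / visits


# Hypothetical campaign: 50 purchases from 2,000 ad-driven visits.
print(f"Conversion rate: {conversion_rate(50, 2000):.1f}%")
```

Whether the denominator is sessions, users, or ad clicks depends on your analytics setup, so keep the definition consistent across campaigns before comparing them.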

Customer Lifetime Value (CLV)

Customer Lifetime Value (CLV), also known as Lifetime Value (LTV), is a metric used in advertising to estimate the total revenue a business can anticipate from a single customer throughout their relationship with the company. A higher CLV boosts profitability, as it not only increases earnings per customer but also justifies higher spending on customer acquisition and retention. Acquiring new customers is costlier than keeping existing ones, so a greater CLV makes each acquisition more valuable. This enables a larger marketing budget, which in turn can attract more new customers.
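There are many ways to estimate this metric. One common simplified model, assumed here purely for illustration, multiplies average order value by purchase frequency and by the expected length of the relationship. The function name and inputs are hypothetical.

```python
def simple_clv(avg_order_value: float, purchases_per_year: float, years_retained: float) -> float:
    """Estimate lifetime revenue per customer with a simple multiplicative model."""
    return avg_order_value * purchases_per_year * years_retained


# Hypothetical customer: $40 per order, 5 orders a year, retained for 3 years.
print(f"Estimated CLV: ${simple_clv(40.0, 5, 3):.2f}")
```

This version ignores margins, discounting, and churn curves, so treat the result as a starting point rather than a forecast.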

Return On Investment (ROI)

Return on Investment (ROI) measures the profit earned from a specific investment, like an ad campaign. It's a crucial metric in advertising, serving as a profitability indicator for business activities. ROI highlights which marketing initiatives are profitable by focusing on profit, not just revenue. This makes it particularly valuable for online advertising and planning marketing budgets, as it directs resources towards the most lucrative activities.
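A minimal sketch of a profit-based ROI calculation follows. It assumes profit is revenue minus cost of goods and ad spend, and that ad spend is the investment; your business may count other costs, and the names and figures are hypothetical.

```python
def roi_percent(revenue: float, cost_of_goods: float, ad_spend: float) -> float:
    """Return profit as a percentage of the amount invested in advertising."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    profit = revenue - cost_of_goods - ad_spend
    return 100 * profit / ad_spend


# Hypothetical campaign: $15,000 revenue, $6,000 cost of goods, $3,000 ad spend.
print(f"ROI: {roi_percent(15000, 6000, 3000):.0f}%")
```

Because the numerator is profit, a campaign with high revenue but thin margins can still show a low or negative ROI.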

Cost Per Acquisition (CPA)

Cost Per Acquisition (CPA) is a metric that represents the total cost involved in gaining a new customer through a specific marketing campaign. To calculate the CPA, divide the total amount spent on the campaign by the number of new paying customers acquired. A lower CPA, particularly when compared to the customer's lifetime value, is an indicator of the effectiveness of your marketing strategies.
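The calculation described above can be sketched in a few lines, together with the comparison against lifetime value. The lifetime value figure and all other numbers are hypothetical.

```python
def cost_per_acquisition(campaign_spend: float, new_customers: int) -> float:
    """Divide total campaign spend by the number of new paying customers."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return campaign_spend / new_customers


# Hypothetical campaign: $3,000 spent, 60 new paying customers.
cpa = cost_per_acquisition(3000, 60)
assumed_clv = 600.0  # hypothetical lifetime value per customer

print(f"CPA: ${cpa:.2f}")
print(f"CLV to CPA ratio: {assumed_clv / cpa:.1f}")
```

A ratio well above 1 suggests each acquired customer is worth more than it cost to win them; what counts as a healthy ratio depends on your margins.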

Return On Ad Spend (ROAS)

Return on Ad Spend (ROAS) measures the revenue generated for every dollar spent on advertising. Unlike ROI, which looks at the profit of a whole investment, ROAS is a narrower metric that focuses on the efficiency of individual ads, campaigns, or channels. This makes it useful for assessing each component of your advertising and identifying the most profitable areas, particularly when spend is spread across diverse activities.
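To make the per-channel view concrete, here is a small sketch that computes ROAS for each channel separately. The channel names and amounts are made up for illustration.

```python
def roas(revenue: float, ad_spend: float) -> float:
    """Return revenue earned per unit of currency spent on ads."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return revenue / ad_spend


# Hypothetical (revenue, ad spend) pairs per channel.
channels = {
    "search": (8000.0, 2000.0),
    "social": (3000.0, 1500.0),
}

roas_by_channel = {name: roas(rev, spend) for name, (rev, spend) in channels.items()}
for name, value in roas_by_channel.items():
    print(f"{name}: {value:.1f}x")
```

Note that ROAS uses revenue, not profit, so a channel with a high ROAS can still be unprofitable once product costs are taken into account.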

Bounce Rate (BR)

Bounce Rate reflects the percentage of visitors who exit your website after viewing only one page, without engaging in any actions like clicking links or making purchases. It's a key indicator of content engagement, with a high bounce rate suggesting that the content or landing page is not compelling enough for the traffic your ads bring. It is often claimed that bounce rate feeds into Google's RankBrain and search rankings; treat that as unconfirmed. Still, a lower bounce rate tends to go hand in hand with more relevant pages and better-performing campaigns.
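Using the single-page definition given above, Bounce Rate can be sketched as follows. The session counts are hypothetical, and analytics tools may define a bounce differently (for example, based on engagement time), so check your tool's definition.

```python
def bounce_rate(single_page_sessions: int, total_sessions: int) -> float:
    """Return the share of sessions that viewed only one page, as a percentage."""
    if total_sessions == 0:
        return 0.0
    return 100 * single_page_sessions / total_sessions


# Hypothetical landing page: 1,200 of 3,000 sessions left after one page.
print(f"Bounce rate: {bounce_rate(1200, 3000):.1f}%")
```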

Engagement Rate (ER)

Engagement Rate is a crucial metric in online advertising, representing the proportion of content interactions (like clicks, comments, likes, and shares) relative to its total views. This metric is significant as it indicates how effectively your content connects with the audience and stands out amidst the vast online content. A higher engagement rate suggests that your content resonates well, prompting more audience interaction.
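As a sketch of the ratio described above, the example below sums the interaction types and divides by total views. The counts are hypothetical, and some platforms divide by reach or followers instead of views.

```python
def engagement_rate(clicks: int, comments: int, likes: int, shares: int, views: int) -> float:
    """Return total interactions as a percentage of total views."""
    if views == 0:
        return 0.0
    interactions = clicks + comments + likes + shares
    return 100 * interactions / views


# Hypothetical post: 500 interactions in total on 25,000 views.
print(f"Engagement rate: {engagement_rate(120, 15, 300, 65, 25000):.1f}%")
```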

Cost Per Click (CPC)

Cost Per Click (CPC) is a metric in online advertising that denotes the cost incurred for each click on an ad. In CPC or PPC (pay-per-click) advertising, costs are incurred each time an ad is clicked, typically determined by real-time bidding algorithms based on ad quality and bid amount. CPC is essential for its simplicity and directness, making it ideal for testing ad effectiveness and understanding the direct impact on digital marketing ROI.

6 Steps to Measure and Optimize Online Advertising Effectiveness

Although there isn't a one-size-fits-all method for measuring the effectiveness of advertising, there is a fundamental guideline to evaluate the impact of your ads within marketing initiatives. Here's an overview of the steps involved:

1. Start with Establishing Goals

Define "advertising effectiveness" in line with your team's goals. This step is critical as it shapes your understanding of a successful ad campaign. Ensure thoroughness in this process, engaging all relevant stakeholders.

Your definition will act as a guiding principle, steering your advertising strategy and helping in the assessment of your campaigns' success. Take your time to get this foundational aspect right, as it's the cornerstone of your advertising efforts.

2. Implement Systems for Data Collection and Analysis

Before launching your campaign, it's crucial to have the necessary data, technology, and processes for measuring progress toward your goals. This includes setting up methods to collect and maintain high-quality data to track advertising effectiveness over time, as well as robust marketing analytics capabilities to create a framework for evaluation.

Setting all of this up is usually the hard part.

Some teams opt for external agencies to handle data analytics, while others prefer specialized tools for more flexibility in analyzing insights and outcomes. The choice depends on your organization's priorities, the marketing team's skill set or the availability of external analytics resources.

Additionally, it's important to measure your advertisement's reach. Understand how many people will see your ad and gather information about these consumers, including what motivates them. The ease of this task varies with the advertising media channel. Digital platforms provide precise data on views and engagement, while traditional media like TV offers only approximations.

Each ad campaign may be unique in its creative idea, but all campaigns have much in common. They start on some kind of advertising platform (search engine, social media, websites, other services) and lead through a CTA (call, buy, subscribe) to the target action on your website or landing page (a purchase, a subscription, a call). Everything is measurable.

Your expertise in collecting, storing, and processing data will define the level at which you measure your online advertising. Let's look at the different levels to measure online advertising:

2.1. Ad Platforms level analysis

You can start by analyzing data at the level of your advertising service. Everything starts with the level of basic insights.

Depending on your advertising channel, you might use Meta Ads Manager (the standalone Facebook Analytics tool has been retired), X (formerly Twitter) Analytics, or another branded analytics service specific to your advertising account. Typically, each advertising service gives you a simple analytics page to explore.

But when you find yourself trying to combine data from separate pages for different advertising channels into a single performance or ROI report, it's time to move beyond this level.

At this level, you rely on the metrics and reports available on the analytics pages of your advertising channels:

- Reach, Engagement, Impressions

- Clicks, CTR, CPA, CPM

- Ad frequency

- Amount spent

- ROAS (if there’s a possibility to import transaction data)
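Most of the metrics in this list are simple ratios of the raw counts a platform reports. The sketch below derives CTR, CPC, CPM, and ad frequency from impressions, reach, clicks, and spend; the class name and figures are hypothetical, and each platform may round or define these slightly differently.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    impressions: int  # total times the ad was shown
    reach: int        # unique people who saw the ad
    clicks: int
    spend: float

    @property
    def ctr_percent(self) -> float:
        """Clicks as a percentage of impressions."""
        return 100 * self.clicks / self.impressions

    @property
    def cpc(self) -> float:
        """Average cost per click."""
        return self.spend / self.clicks

    @property
    def cpm(self) -> float:
        """Cost per thousand impressions."""
        return 1000 * self.spend / self.impressions

    @property
    def frequency(self) -> float:
        """Average number of times each reached person saw the ad."""
        return self.impressions / self.reach


# Hypothetical campaign totals.
stats = AdStats(impressions=100_000, reach=40_000, clicks=2_000, spend=500.0)
print(f"CTR {stats.ctr_percent:.1f}% | CPC ${stats.cpc:.2f} | "
      f"CPM ${stats.cpm:.2f} | frequency {stats.frequency:.1f}")
```

The sketch assumes non-zero impressions, reach, and clicks; a production version would guard against division by zero.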

The biggest trouble at the advertising service level comes when it's time to tie your ad data to actual revenue.

You can see a detailed report on Facebook on the day-to-day distribution of campaign costs, but what did you earn on those days? To know that, you have to jump through hoops: import conversion data or install additional tracking code wherever that data is stored.

For example, to connect Facebook analytics with your website conversions, you should use the Facebook Pixel (now called the Meta Pixel) to segment your audience and track conversions. At a minimum, add the Pixel conversion code to all conversion pages.

To get goals and transactions in your Google Ads reports, you’ll need to take further steps:

- Establish a connection between your GA4 and Google Ads accounts.

- Switch on the automatic tagging function.

- Ensure that you’ve run ads targeted at existing types of goals and conversions.

After that, you'll be able to see your conversion data in your Google Ads account. Frankly speaking, you can track conversion events even without Google Analytics 4; Google's official guide describes several methods.

But even if you get the data, you'll face serious limitations in connecting it with other services, and you'll likely see discrepancies between the data on your ad service analytics page and your true sales data. When launching a business, you should get past this level of advertising analytics as fast as possible. The bigger and more complicated your online advertising plan becomes, the less transparent your cost and profit attribution may be. This can lead to financial losses, which can be avoided by moving to the proper tool in time.
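One quick sanity check for the automatic tagging step is to look at the landing page URLs your ads produce: Google Ads auto-tagging appends a `gclid` parameter, while manual tagging relies on `utm_` parameters. The helper below is a hypothetical sketch using only Python's standard library, and the example URLs are invented.

```python
from urllib.parse import parse_qs, urlparse


def tagging_status(landing_url: str) -> str:
    """Classify a landing URL by the tracking parameters in its query string."""
    params = parse_qs(urlparse(landing_url).query)
    if "gclid" in params:
        return "auto-tagged"
    if any(name.startswith("utm_") for name in params):
        return "manually tagged"
    return "untagged"


# Hypothetical landing URLs collected from server logs.
for url in (
    "https://example.com/landing?gclid=abc123",
    "https://example.com/landing?utm_source=facebook&utm_medium=cpc",
    "https://example.com/landing",
):
    print(url, "->", tagging_status(url))
```

Untagged paid traffic usually ends up misattributed in reports, which is one source of the discrepancies described above.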

2.2. Google Analytics 4 advertising analysis

This is the next level, where we try to gather all the data and analyze the efficiency of our advertising efforts from the point of view of the website with the help of metrics and dimensions.

At this level, you gather all information about your conversion goals, import your costs from social media manually (or semi-manually with the help of Google Sheets and a service's API) and, after connecting Google Analytics 4 with Google Ads, almost forget about the headache of measuring online ads.

But here's the thing. The data is always aggregated: you can slice it into segments, cohorts, and channels, but it's an endless battle. Data sampling is always lurking as well, and if you hit the limits, part of your data will be hidden from you like a secret treasure.

We’ve been investigating the limitations and peculiarities of Google Analytics 4 for a long time.

Here are some guides for setting up and tuning Google Analytics 4 on your website:

- How to import cost data hassle-free

- Ecommerce guide for those who want to save time

- How to add CRM data to Google Analytics 4

All of this CRM and cost data is needed to estimate the efficiency of your online advertising not only for a single advertising platform but also across other services.

To analyze online advertising costs in Google Analytics 4 (GA4), you have to import the relevant cost data yourself, typically by uploading a CSV file or via SFTP. This includes ad spend across various platforms, like Google Ads, Facebook, and Instagram. GA4 then enables a comprehensive analysis of these expenses against advertising outcomes.
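Before any upload, spend from different platforms has to be brought into one consistent table. The sketch below aggregates hypothetical cost rows by date, source, medium, and campaign and writes them out as CSV. The column names are illustrative assumptions, not GA4's required import schema, so map them to the fields your import configuration expects.

```python
import csv
import io
from collections import defaultdict

# Hypothetical rows exported from different ad platforms.
RAW_COSTS = [
    {"date": "2024-02-01", "source": "google", "medium": "cpc", "campaign": "brand", "cost": "120.50"},
    {"date": "2024-02-01", "source": "google", "medium": "cpc", "campaign": "brand", "cost": "30.25"},
    {"date": "2024-02-01", "source": "facebook", "medium": "cpc", "campaign": "promo", "cost": "80.00"},
]


def aggregate_costs(rows):
    """Sum cost per (date, source, medium, campaign) combination."""
    totals = defaultdict(float)
    for row in rows:
        key = (row["date"], row["source"], row["medium"], row["campaign"])
        totals[key] += float(row["cost"])
    return dict(totals)


def to_csv(totals) -> str:
    """Render aggregated totals as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["date", "source", "medium", "campaign", "cost"])
    for key in sorted(totals):
        writer.writerow([*key, f"{totals[key]:.2f}"])
    return buffer.getvalue()


totals = aggregate_costs(RAW_COSTS)
print(to_csv(totals))
```

Keeping source, medium, and campaign values identical to the UTM values used in your ad URLs is what lets imported costs line up with sessions and conversions.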

If typical reports don’t fit your needs, you should use custom reports where you can choose the parameters, filters, and data presentation to see all KPIs on one board.

In Google Analytics 4 (GA4), attribution modeling plays a crucial role in evaluating the effectiveness of advertising campaigns. GA4 offers a variety of attribution models that allocate conversion credit to different marketing touchpoints or channels based on specific rules or methodologies. These models help in understanding the impact and effectiveness of marketing efforts on driving conversions. Selecting the right attribution model in GA4 depends on your business goals, customer journey, and the nature of your marketing campaigns. Experimentation and analysis of different models can provide insights into the effectiveness of various marketing strategies and help optimize resource allocation.

Can the classic attribution models in Google Analytics 4 evaluate all channels in synergy? They can, to a degree: how conversions are attributed depends on the scope (user-scoped, session-scoped, or event-scoped) and on the specific model selected. The accuracy is doubtful, though. Each attribution model has its own logic, which is just a mathematical assumption and can't easily be changed in response to reality. If you want a crash test for your attribution model, try to evaluate its cost efficiency.

All complaints aside, Google Analytics 4 is a good fit for a certain stage of growth. But we can't say that Google Analytics 4 is a 100% accurate tool for evaluating advertising campaigns.
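To see why each model is "just a mathematical assumption", it helps to run the same conversion path through several rule-based models. The sketch below implements generic first-click, last-click, and linear rules; it illustrates the idea only and is not how GA4 or any specific tool implements attribution. The path and conversion value are hypothetical.

```python
from collections import defaultdict


def attribute(path, value, model):
    """Split a conversion's value across the channels in its path using a simple rule."""
    if not path:
        return {}
    credit = defaultdict(float)
    if model == "first_click":
        credit[path[0]] += value
    elif model == "last_click":
        credit[path[-1]] += value
    elif model == "linear":
        share = value / len(path)
        for channel in path:
            credit[channel] += share
    else:
        raise ValueError(f"unknown model: {model}")
    return dict(credit)


# Hypothetical journey that ended in a $100 purchase.
journey = ["social", "email", "search", "email"]
for model in ("first_click", "last_click", "linear"):
    print(model, attribute(journey, 100.0, model))
```

The same journey gives all the credit to social, all of it to email, or a split across three channels depending on the rule, which is why the choice of model can change budget decisions.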

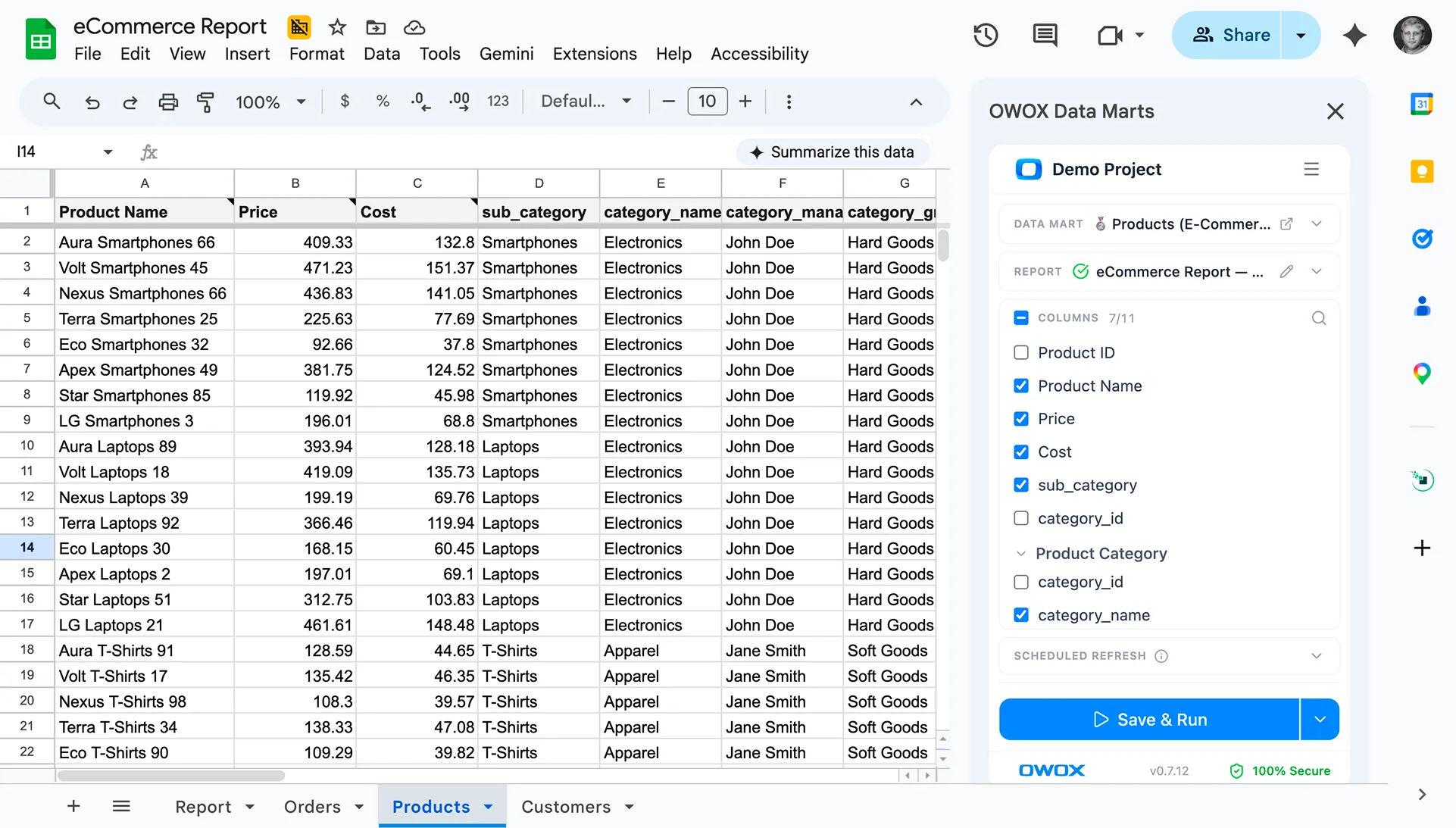

2.3. Marketing analytics with OWOX BI

Marketing analytics with OWOX BI is the best approach for those who aim at perfection. It helps you not only calculate the efficiency of online advertising but also see the whole situation with marketing and the business itself.

Here are ways to use OWOX BI for evaluating online advertising in the framework of marketing analytics:

- Create a meaningful and transparent dataset including data from all of your advertising accounts (without size limits) with the help of OWOX BI Pipeline. This will help you create a pool of data that’s harmonized, aligned, and easy to process and analyze. You’ll be able to add CRM data and other offline data and get reports on your advertising efficiency, visualizing them in an interface you already know, such as Google Sheets or Looker Studio.

- Get a set of attribution models, including a transparent ML funnel-based attribution model that correctly distributes values for each touchpoint.

3. Determine Optimal Ad Frequency

Identifying the effective frequency involves deciding the right number of ad exposures needed for consumer action without overdoing it. Balance is crucial to avoid overwhelming or under-representing your message.

Leverage marketing technology to assess past ad performance and varying touchpoints. This analysis helps establish the ideal frequency that resonates with your audience, ensuring your message is impactful but not excessive.
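One simple way to approach this analysis, assuming you can export how many times each user saw an ad and whether they converted, is to compare conversion rates across exposure counts. The data below is invented for illustration.

```python
from collections import defaultdict


def conversion_rate_by_frequency(users):
    """Map each exposure count to the conversion rate (in percent) of users at that count."""
    seen = defaultdict(int)
    converted = defaultdict(int)
    for exposures, did_convert in users:
        seen[exposures] += 1
        converted[exposures] += int(did_convert)
    return {n: 100 * converted[n] / seen[n] for n in sorted(seen)}


# Hypothetical (ad exposures, converted?) records, one per user.
users = [
    (1, False), (1, False), (1, True), (1, False),
    (3, True), (3, True), (3, False), (3, False),
    (6, True), (6, False), (6, False), (6, False),
]

rates = conversion_rate_by_frequency(users)
for exposures, rate in rates.items():
    print(f"{exposures} exposures: {rate:.0f}% converted")
```

In this made-up sample, conversion peaks at three exposures and falls off at six, the kind of pattern that would suggest capping frequency. Real data needs far larger samples, and heavy exposure may simply correlate with heavy browsing rather than cause conversions.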

4. Optimize Advertising Touchpoints

Keep a vigilant eye on your ad performance throughout the campaign. Adjust touchpoints that aren’t effectively leading to conversions. It’s important to recognize that some touchpoints indirectly assist in conversions, while others directly contribute.

Use a marketing attribution model that fairly recognizes the role of each touchpoint. Avoid over-reliance on first-click or last-click models, as they can miss significant interactions. A model that credits every touchpoint gives a more accurate and holistic view of your ad campaign's effectiveness.

5. Evaluate Your Marketing Mix Strategy

Steering through the diverse array of marketing channels to identify the most effective combination can be challenging. Utilize marketing technologies to analyze how different channels interact and contribute to conversion optimization. Consider, for example, the impact of combining display ads on blogs with sponsored YouTube content on conversion rates. Tailor your approach to include a mix of channels that collectively resonate with your target audience, aiming for a harmonious and effective marketing mix.
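The display-plus-YouTube example can be explored with a small sketch that groups customer journeys by the set of channels they touched and compares conversion rates. The journey data is hypothetical, and a real analysis would need enough volume per combination to be meaningful.

```python
from collections import defaultdict


def conversion_rate_by_mix(journeys):
    """Map each channel combination to its conversion rate in percent."""
    seen = defaultdict(int)
    converted = defaultdict(int)
    for channels, did_convert in journeys:
        mix = " + ".join(sorted(set(channels)))  # order-independent label
        seen[mix] += 1
        converted[mix] += int(did_convert)
    return {mix: 100 * converted[mix] / seen[mix] for mix in sorted(seen)}


# Hypothetical (channels touched, converted?) records, one per journey.
journeys = [
    (["display"], False), (["display"], False), (["display"], False), (["display"], True),
    (["youtube"], False), (["youtube"], True),
    (["display", "youtube"], True), (["youtube", "display"], True),
    (["display", "youtube"], False), (["display", "youtube"], True),
]

mix_rates = conversion_rate_by_mix(journeys)
for mix, rate in mix_rates.items():
    print(f"{mix}: {rate:.0f}% converted")
```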

6. Connect Campaign Results with Revenue Impact

Effective marketing goes beyond immediate profits, considering broader metrics like brand value and customer lifetime value. However, leadership often prioritizes direct financial impacts. Utilize platforms that connect these broader metrics to long-term revenue, demonstrating how media investments foster top-line growth and minimize waste. These tools should balance immediate sales with strategic brand and customer relationship development, offering a comprehensive view of how advertising influences both short-term gains and sustained financial health.

Marketing Analytics Reports for Measuring Online Advertising Efficiency

Here’s a complete list of reports for measuring your online advertising based on the data you can get after marketing analytics is established:

- All-in-one Performance Report

- Cohort analysis report

- SaaS-model Report

- ROPO report, including offline events and activities

- Any kind of custom reports that can be built in Google Analytics 4

- Reports on the customer conversion path, with all information about demographics and cross-device activity

All of these reports are a step forward to understanding the true value of your advertising campaigns and your overall marketing efforts. So that you can see how it works, we have prepared a selection of useful dashboards for marketers.

Optimizing Online Advertising with OWOX: Your Gateway to Measuring Effectiveness

Ad campaign measurement helps marketers in the world of digital marketing to understand how their ads are resonating with the audience, which campaigns are driving traffic and conversions, and how they are impacting the overall return on investment (ROI). This data-driven approach allows for continuous optimization of ad campaigns, ensuring that marketing budgets are allocated efficiently and that campaigns are tailored to meet the evolving needs and preferences of the target audience.

Discover how OWOX BI revolutionizes your approach to online advertising effectiveness. Our advanced analytics solutions provide deep insights into ad performance, audience behavior, and ROI optimization. With OWOX BI you will embrace data-driven decision-making, refine targeting strategies, and enhance your overall marketing efficiency. Join leading businesses in harnessing the power of OWOX BI for a comprehensive, accurate, and actionable understanding of your digital advertising campaigns.

Key takeaways

- Measuring online advertising is one of the main routine tasks of digital marketers and analysts. There are two ways of doing this: manually and automatically.

- The more complicated your advertising campaigns are, the more sophisticated a tool you’ll need for analyzing all activities.

- If you’re stuck at the advertising service level of analytics, you’re risking your advertising budget — even if you think everything is under control.

- Evolution to marketing analytics is unavoidable if your business is growing. Align your data earlier to save time and money on further steps toward implementing a marketing analytics system for your digital marketing.

Frequently asked questions

Customer Lifetime Value (CLV) estimates the total revenue a business can expect from a single customer over the duration of their relationship. This metric is pivotal in advertising as it helps in determining the long-term value of customers, guiding decisions on customer acquisition and retention budgeting.

Marketing analytics plays a critical role in optimizing online advertising by enabling the creation of a comprehensive dataset, including all advertising account data, CRM, and offline data.

Evaluating the marketing mix strategy involves analyzing the interaction and contribution of different marketing channels to optimize conversions. It's vital for identifying the most effective combination of channels, such as display ads and sponsored content, to resonate with the target audience.

You can track conversion rates by setting up conversion tracking in your advertising platform, such as Google Ads or Facebook Ads. This involves placing a tracking code on your website that tracks when users complete a desired action, such as making a purchase or filling out a form.

To determine ROI, you need to measure the revenue generated from your campaign and compare it against the cost of running the campaign. You can use analytics tools or reporting features in your advertising platform to calculate ROI.

To optimize your campaigns, you can test different ad creatives, adjust targeting settings, and analyze performance data to identify areas for improvement. It's also important to regularly review and adjust your budgets to ensure you're getting the best results for your ad spend.
