Understanding ETL: The Ultimate Guide 101

In this article, we analyze in detail what ETL is and why ETL tools are needed by analysts and marketers.

The more data from various sources a company collects, the greater its capabilities in analytics, data science, and machine learning. However, the challenges of processing and analyzing that data grow along with the opportunities.

After all, before building reports and searching for insights, all this raw and disparate data must be processed: cleaned, checked, converted to a single format, and merged.

Extract, Transform, and Load (ETL) processes and tools are used for these tasks.

What is ETL?

ETL (Extract, Transform, Load) is the method of gathering data from multiple sources, converting it into a standardized format, and moving it to a destination, often a Data Warehouse, for valuable business insights. It involves extracting data using specific connectors and configurations, then transforming it through various operations such as filtering, aggregation, ranking, and applying business rules, all tailored to meet specific business needs.

A Brief History of How ETL Came About

ETL gained traction in the 1970s as companies started using multiple databases, creating a need to integrate data efficiently.

By the late 1980s, new data storage technologies provided access to data across various systems. However, many databases required vendor-specific ETL tools, causing different departments to adopt different solutions. This resulted in the constant need for adjusting scripts to handle various data sources. As data volumes grew, automated ETL processes emerged to reduce the need for manual coding.

What is the ETL Process?

The ETL process simplifies the task of pulling data from multiple sources, transforming it, and loading it into a data warehouse. A well-structured ETL workflow ensures that the data in the destination system is accurate, consistent, and ready for use by business users or applications. Having all relevant data in one place enables quick generation of reports and dashboards.

Traditionally, ETL processes required a staging area to temporarily store and organize data before it was moved to a central repository. However, with the rise of cloud-based data warehouses like Google BigQuery, Snowflake, and Amazon Redshift, the need for a separate staging area has lessened.

These platforms offer scalable, high-performance environments where transformations can be performed directly using SQL. Additionally, extracting data from SaaS applications has become more efficient with the use of APIs and webhooks.

The availability of pre-calculated OLAP summaries further streamlines data analysis, making the process faster and more straightforward.

3 Steps in the ETL Process

The ETL process consists of three steps: extract, transform, and load. Let’s take a closer look at each of them.

Step 1. Extract Data

At this step, data (structured and semi-structured) is extracted from different sources and placed in an intermediate area (a temporary database or server) for subsequent processing.

Sources of such data may be:

- Websites

- Mobile devices and applications

- CRM/ERP systems

- API interfaces

- Marketing services

- Analytics tools

- Databases

- Cloud, hybrid, and on-premises environments

- Flat files

- Spreadsheets

- SQL or NoSQL databases

- Email providers

Data collected from different sources is usually heterogeneous and presented in different formats: XML, JSON, CSV, and others.

Therefore, before extracting it, you must create a logical data map that describes the relationship between data sources and the target data.
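A logical data map can be as simple as a lookup table from source fields to target columns. The sketch below is only an illustration: the source names (`crm`, `ads`), the field names, and the `apply_data_map` helper are all hypothetical.

```python
# Hypothetical logical data map: (source, source_field) -> target column.
DATA_MAP = {
    ("crm", "CustomerEmail"): "email",
    ("crm", "SignupDate"): "signup_date",
    ("ads", "user_email"): "email",
    ("ads", "cost_usd"): "ad_cost",
}


def apply_data_map(source, record):
    """Rename a raw record's fields to target column names.

    Fields without a mapping are dropped rather than guessed.
    """
    mapped = {}
    for field, value in record.items():
        target = DATA_MAP.get((source, field))
        if target is not None:
            mapped[target] = value
    return mapped


crm_row = apply_data_map("crm", {"CustomerEmail": "a@example.com", "Internal": 1})
ads_row = apply_data_map("ads", {"user_email": "a@example.com", "cost_usd": 12.5})
print(crm_row)
print(ads_row)
```

Keeping the map as data rather than as scattered renaming code makes it easier to review when a source adds or renames a field.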

At this step, it’s necessary to check if:

- Extracted records match the source data

- Spam/unwanted data will get into the download

- Data meets destination storage requirements

- There are duplicates and fragmented data

- All the keys are in place
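Some of the checks above can be automated. The following is a minimal sketch, assuming records are plain dictionaries and that `key_fields` names the columns that identify a record; the `check_extract` function is hypothetical and covers only record counts, duplicates, and missing keys.

```python
def check_extract(source_rows, extracted_rows, key_fields):
    """Return simple quality findings for an extracted batch."""
    seen = set()
    duplicates = 0
    missing_keys = 0
    for row in extracted_rows:
        # A key is considered missing if it is absent, None, or empty.
        if any(row.get(k) in (None, "") for k in key_fields):
            missing_keys += 1
            continue
        key = tuple(row[k] for k in key_fields)
        if key in seen:
            duplicates += 1
        seen.add(key)
    return {
        "count_matches_source": len(extracted_rows) == len(source_rows),
        "duplicates": duplicates,
        "missing_keys": missing_keys,
    }


source = [{"id": 1}, {"id": 2}, {"id": 3}]
extracted = [{"id": 1}, {"id": 2}, {"id": 2}, {"id": None}]
report = check_extract(source, extracted, key_fields=["id"])
print(report)
```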

Data can be extracted in three ways:

- Partial extraction with update notification: The source notifies you when data changes, so you extract only the changed records.

- Partial extraction without update notification: Not all data sources send update notifications; however, some can identify which records have changed and provide an extract of those records.

- Full extraction: Some systems cannot identify which data has changed at all; in this case, only full extraction is possible. To detect changes, you’ll need a copy of the previous extract in the same format to compare against.
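For the full extraction case, changes have to be found by comparing the new extract with the previous one. A rough sketch of that comparison, assuming every record carries a unique `id` field (the `diff_snapshots` helper and the sample data are hypothetical):

```python
def diff_snapshots(previous, current, key="id"):
    """Compare two full extracts and classify rows by key."""
    prev_by_key = {row[key]: row for row in previous}
    curr_by_key = {row[key]: row for row in current}
    inserted = [r for k, r in curr_by_key.items() if k not in prev_by_key]
    deleted = [r for k, r in prev_by_key.items() if k not in curr_by_key]
    updated = [
        r for k, r in curr_by_key.items()
        if k in prev_by_key and prev_by_key[k] != r
    ]
    return {"inserted": inserted, "updated": updated, "deleted": deleted}


previous = [{"id": 1, "plan": "free"}, {"id": 2, "plan": "pro"}]
current = [{"id": 1, "plan": "pro"}, {"id": 3, "plan": "free"}]
changes = diff_snapshots(previous, current)
print(changes)
```

This keeps both snapshots in memory, which is fine for small tables; for large extracts the same comparison is usually pushed into the database or done on hashed rows.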

This step can be performed either manually by analysts or automatically. However, manually extracting data is time-consuming and can lead to errors. Therefore, we recommend using tools like OWOX that automate the ETL process and provide you with high-quality data.

Step 2. Transform Data

At this step, raw data collected in an intermediate area (temporary storage) is converted into a uniform format that meets the needs of the business and the requirements of the target data storage.

This includes both structured and unstructured data, ensuring comprehensive data analysis.

This approach (using an intermediate storage location instead of uploading data directly to the final destination) allows you to quickly roll back data if something goes wrong.

Data transformation can include the following operations:

- Cleaning — Eliminate data inconsistencies and inaccuracies.

- Standardization — Convert all data types to the same format: dates, currencies, etc.

- Deduplication — Exclude or discard redundant data.

- Validation — Delete unused data and flag anomalies.

- Re-sorting rows or columns of data

- Mapping — Merge data from two values into one or, conversely, split data from one value into two.

- Supplementing — Extract data from other sources.

- Formatting data into tables according to the schema of the target data storage

- Auditing data quality and reviewing compliance

- Other tasks — Apply any additional/optional rules to improve data quality; for example, if the first and last names in the table are in different columns, you can merge them.
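Several of these operations can be combined in a single pass over the data. The sketch below illustrates cleaning, validation, deduplication, date standardization, and merging name columns; the field names, the two accepted date formats, and the rule that email identifies a record are assumptions made for the example.

```python
from datetime import datetime


def parse_date(text):
    """Standardize a date string to ISO 8601, or return None if unrecognized."""
    for fmt in ("%Y-%m-%d", "%d/%m/%Y"):  # assumed input formats
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def transform(rows):
    """Clean, validate, deduplicate, and reshape raw rows."""
    seen = set()
    result = []
    for row in rows:
        email = row.get("email", "").strip().lower()  # cleaning
        if not email:
            continue  # validation: drop rows without an email
        if email in seen:
            continue  # deduplication: keep the first occurrence
        seen.add(email)
        result.append({
            "email": email,
            "signup_date": parse_date(row["signup"]),  # standardization
            "full_name": f"{row['first_name']} {row['last_name']}",  # merging
        })
    return result


raw = [
    {"email": " Ann@Example.com ", "signup": "2024-01-05", "first_name": "Ann", "last_name": "Lee"},
    {"email": "ann@example.com", "signup": "05/01/2024", "first_name": "Ann", "last_name": "Lee"},
    {"email": "", "signup": "2024-02-01", "first_name": "No", "last_name": "Email"},
    {"email": "bob@example.com", "signup": "17/03/2024", "first_name": "Bob", "last_name": "Ray"},
]
clean = transform(raw)
print(clean)
```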

Transformation is perhaps the most important part of the ETL process. It helps you improve data quality and ensures that processed data is delivered to storage in a fully compatible manner and ready for use in reporting and other business tasks.

In our experience, some companies still don’t prepare business-ready data and build reports on raw data. The main problem with this approach is endless debugging and rewriting of SQL queries. Therefore, we strongly recommend not ignoring this stage.

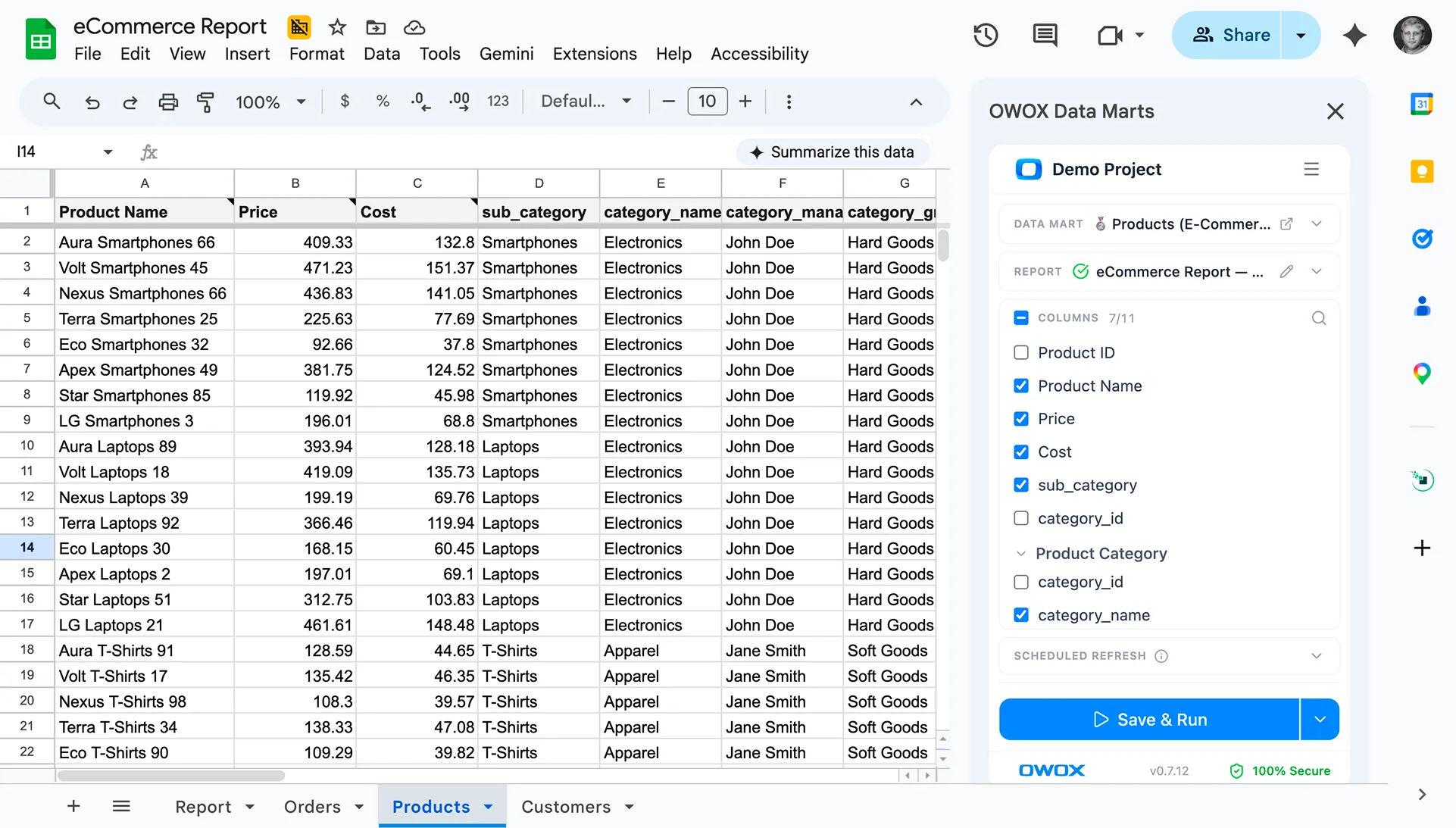

OWOX automatically collects raw data from different sources and converts it into a report-friendly format (with SQL transformation templates). You receive ready-made datasets that are automatically transformed into the desired structure, taking into account nuances important for marketers.

You won’t have to spend time developing and supporting complex pipelines, delving into the data structure, or searching for hours for the causes of discrepancies.

Step 3. Load Data

At this point, processed data from the staging area is uploaded to the target database, storage, or data lake, either locally or in the cloud.

This provides convenient access to business-ready data to different teams within the company.

There are several upload options:

- Initial load — Fill all tables in data storage for the first time.

- Incremental load — Write new data periodically as needed. In this case, the system compares incoming data with what is already available and creates additional records only if it detects new data. This approach reduces the cost of processing data by reducing its volume.

- Full update — Delete table contents and reload the table with the latest data.
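The three load options can be illustrated with Python's built-in `sqlite3` module standing in for the target storage. This is a sketch under assumptions: the `orders` table and its columns are hypothetical, and a real warehouse would typically use its own bulk-load or merge mechanism instead.

```python
import sqlite3


def initial_load(conn, rows):
    """Create the table and fill it for the first time."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (id INTEGER PRIMARY KEY, amount REAL)"
    )
    conn.executemany("INSERT INTO orders (id, amount) VALUES (?, ?)", rows)


def incremental_load(conn, rows):
    """Add only rows that are not already present."""
    # INSERT OR IGNORE skips rows that would violate a uniqueness
    # constraint, here the primary key.
    conn.executemany("INSERT OR IGNORE INTO orders (id, amount) VALUES (?, ?)", rows)


def full_refresh(conn, rows):
    """Delete the table contents and reload from scratch."""
    conn.execute("DELETE FROM orders")
    conn.executemany("INSERT INTO orders (id, amount) VALUES (?, ?)", rows)


def count(conn):
    return conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]


conn = sqlite3.connect(":memory:")
initial_load(conn, [(1, 10.0), (2, 20.0)])
after_initial = count(conn)
incremental_load(conn, [(2, 20.0), (3, 30.0)])  # id 2 already exists
after_incremental = count(conn)
full_refresh(conn, [(7, 70.0)])
after_refresh = count(conn)
print(after_initial, after_incremental, after_refresh)
```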

You can perform each of these steps using ETL tools or manually using custom code and SQL queries.

ETL Pipeline

An ETL pipeline is a series of processes designed to extract data from multiple sources, transform it into a standardized format, and load it into a centralized database or data warehouse. These pipelines are essential for integrating data from various sources, ensuring it is in a consistent format for analysis and reporting.

ETL pipelines are particularly valuable for handling large volumes of data, performing data quality checks, and ensuring data governance and security. By automating these processes, ETL pipelines help organizations maintain high data quality and streamline their data integration efforts.
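At its smallest, an ETL pipeline is three functions chained together. The following sketch uses an inline CSV string as the source and an in-memory SQLite database as the destination; the data, the column names, and the `daily_cost` table are all made up for illustration.

```python
import csv
import io
import sqlite3

RAW_CSV = (
    "date,channel,cost\n"
    "2024-01-01,search,10.5\n"
    "2024-01-01,social,4.5\n"
    "2024-01-02,search,7\n"
)


def extract(text):
    """Read raw rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Aggregate cost per day."""
    totals = {}
    for row in rows:
        totals[row["date"]] = totals.get(row["date"], 0.0) + float(row["cost"])
    return sorted(totals.items())


def load(conn, rows):
    """Write the aggregated rows to the destination table."""
    conn.execute("CREATE TABLE daily_cost (date TEXT PRIMARY KEY, cost REAL)")
    conn.executemany("INSERT INTO daily_cost VALUES (?, ?)", rows)


def run_pipeline(text, conn):
    load(conn, transform(extract(text)))


conn = sqlite3.connect(":memory:")
run_pipeline(RAW_CSV, conn)
loaded = conn.execute("SELECT date, cost FROM daily_cost ORDER BY date").fetchall()
print(loaded)
```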

ETL Pipeline vs Data Pipeline

While the terms “ETL pipeline” and “data pipeline” are often used interchangeably, there is a subtle difference between the two. A data pipeline is a broader term that encompasses any set of processes, tools, or actions used to ingest data from various sources and move it to a target repository.

An ETL pipeline, however, is a specific type of data pipeline that involves extracting, transforming, and loading data into a target system. In essence, all ETL pipelines are data pipelines, but not all data pipelines follow the ETL process. Understanding this distinction helps organizations choose the right approach for their data integration needs.

Types of ETL Pipelines

There are several types of ETL pipelines, each suited to different data integration needs:

The first type is the batch processing pipeline. It is used for traditional analytics and business intelligence use cases, where data is periodically collected, transformed, and moved to a cloud data warehouse. Batch processing is ideal for handling large volumes of data that do not require real-time updates.

The second type is the real-time processing pipeline, which is designed for real-time data integration: data is ingested from streaming sources such as IoT devices, social media feeds, and sensors. Real-time processing makes data available for analysis and decision-making almost as soon as it is generated.

The third type is the cloud ETL pipeline, which is used for cloud-based data integration: data is extracted from cloud-based sources, transformed, and loaded into a cloud data warehouse.

Cloud ETL pipelines leverage the scalability and flexibility of cloud platforms to handle large and complex data integration tasks efficiently.
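The difference between batch and real-time processing can be shown with a toy aggregation. In this sketch, which uses made-up events and hypothetical function names, the batch version waits for the complete set of events, while the streaming version emits an updated result after each one.

```python
def batch_total(events):
    """Batch style: process a complete, already-collected set of events."""
    return sum(e["value"] for e in events)


def streaming_totals(events):
    """Streaming style: update and emit the result after every event."""
    total = 0
    for event in events:
        total += event["value"]
        yield total


events = [{"value": 3}, {"value": 5}, {"value": 2}]
nightly = batch_total(events)
live = list(streaming_totals(iter(events)))
print(nightly, live)
```

Real streaming systems add concerns this sketch ignores, such as late or out-of-order events and recovery after failure.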

Advantages of ETL

ETL stands as a foundational technique for data management, facilitating the seamless aggregation and refinement of data across diverse systems.

Save Time with Automated ETL

The biggest benefit of the ETL process is that it helps you automatically collect, convert, and consolidate data. You can save time and effort and eliminate the need to manually import a huge number of lines.

Simplify Complex Data with ETL

Over time, your business has to deal with a large amount of complex and diverse data: time zones, customer names, device IDs, locations, etc. Add a few more attributes, and you’ll have to format data around the clock. In addition, incoming data can be in different formats and of different types. ETL makes your life much easier.

Minimize Human Error Risks

No matter how careful you are with your data, you are not immune to mistakes. For example, data may be accidentally duplicated in the target system, or a manual input may contain an error. By eliminating human influence, an ETL tool helps you avoid such problems.

Enhance Decision-Making

By automating critical data workflows and reducing the chance of errors, ETL ensures that the data you receive for analysis is of high quality and can be trusted. And quality data is fundamental to making better corporate decisions.

Increase ROI

Because it saves you time, effort, and resources, the ETL process ultimately helps you improve your ROI. In addition, consolidated and reliable data supports better business decisions, which in turn can increase profits.

Challenges of ETL

Challenges in the ETL process arise at every stage:

Extract

- Incompatible Data Sources: Managing various data formats and evolving APIs from multiple SaaS applications can complicate extraction.

- Constant Monitoring: Continuous oversight is needed to detect errors, prevent data loss, and ensure scripts run effectively.

- Granular Control: Handling sensitive data requires strict compliance with security and regulatory standards.

Transform

- Ad-hoc Data Sources and Formats: Managing varied data formats like CSVs, spreadsheets, and cloud storage often makes the process error-prone.

- Complex Data Transformations: Different data structures demand resource-intensive and time-consuming transformations.

Load

- Data Quality and Validation: Ensuring data accuracy and freshness is vital, requiring reliable, self-healing pipelines with additional checks.

- Schema Modification: Regular updates to the data warehouse schema must be managed carefully when loading data.

- Order of Insertion: Managing foreign key constraints and ensuring proper data loading order is essential for maintaining data integrity.
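The order-of-insertion problem can be handled by sorting tables so that every referenced table is loaded before the tables that depend on it. A minimal sketch using `graphlib` from the Python standard library (3.9+); the schema below is hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical schema: each table maps to the tables its foreign keys reference.
DEPENDENCIES = {
    "customers": set(),
    "products": set(),
    "orders": {"customers"},
    "order_items": {"orders", "products"},
}

# static_order() yields each table only after all tables it depends on.
load_order = list(TopologicalSorter(DEPENDENCIES).static_order())
print(load_order)
```

If the schema contains circular references, `TopologicalSorter` raises `CycleError`, which is a useful signal that constraints need to be deferred or loaded in two passes.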

ETL vs ELT: Key Differences

ELT (Extract, Load, Transform) is essentially a modern look at the familiar ETL process in which data is converted after it’s loaded to storage.

Traditional ETL tools extract and convert data from different sources before loading it into storage. With the advent of cloud storage, there’s no need to clean up data at the intermediate stage between the source and target data storage locations.

ELT is particularly relevant to advanced analytics. For example, you can upload raw data into a data lake and then merge it with data from other sources or use it to train prediction models. Keeping data raw allows analysts to expand their capabilities. This approach is fast because it leverages the power of modern data processing mechanisms and reduces unnecessary data movement.
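To make the ELT order of operations concrete, here is a small sketch in which raw data is loaded untouched and the transformation runs afterwards as SQL inside the storage engine. An in-memory SQLite database stands in for a cloud warehouse, and the table and column names are assumptions for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Load: raw events go in untouched, including messy casing and text amounts.
conn.execute("CREATE TABLE raw_events (user_email TEXT, amount TEXT)")
conn.executemany(
    "INSERT INTO raw_events VALUES (?, ?)",
    [(" Ann@Example.com", "10.50"), ("ann@example.com", "4.50"), ("bob@example.com", "3")],
)

# Transform: done later, inside the storage engine, with SQL.
conn.execute(
    """
    CREATE TABLE revenue_by_user AS
    SELECT lower(trim(user_email)) AS email,
           SUM(CAST(amount AS REAL)) AS revenue
    FROM raw_events
    GROUP BY lower(trim(user_email))
    """
)
revenue = conn.execute(
    "SELECT email, revenue FROM revenue_by_user ORDER BY email"
).fetchall()
print(revenue)
```

Because `raw_events` is kept as loaded, a different transformation can be written later without extracting the data again.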

Which should you choose? ETL or ELT? If you work locally and your data is predictable and comes from only a few sources, then traditional ETL will suffice. However, it’s becoming less and less relevant as more companies move to cloud or hybrid data architectures.

5 Keys to a Successful ETL Implementation

If you want to implement a successful ETL process, follow these steps:

Step 1: Identify Data Sources

Identify the sources of the data you wish to collect and store. These sources can be SQL relational databases, NoSQL non-relational databases, software as a service (SaaS) platforms, or other applications. Once the data sources are connected, define the specific data fields you want to extract. Then ingest this data from the various sources in raw form.

Step 2: Unify Data

Unify this data using a set of business rules (such as aggregation, joining, sorting, merge functions, and so on).

Step 3: Load Data

After transformation, the data must be loaded to storage. At this step, you need to decide on the frequency of data uploading. Specify whether you want to record new data or update existing data.

Step 4: Validate Data Transfer

It’s important to check the number of records before and after transferring data to the repository. This should be done to exclude invalid and redundant data.
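A record-count check is easiest to trust when rejected rows are counted explicitly, so that every source record is either loaded or accounted for. A small sketch, with a hypothetical `reconcile` function and made-up numbers:

```python
def reconcile(source_count, loaded_count, rejected_count=0):
    """Check that every source record is either loaded or explicitly rejected."""
    unaccounted = source_count - loaded_count - rejected_count
    return {"ok": unaccounted == 0, "unaccounted": unaccounted}


good = reconcile(source_count=1000, loaded_count=990, rejected_count=10)
bad = reconcile(source_count=1000, loaded_count=950, rejected_count=10)
print(good, bad)
```

A nonzero `unaccounted` value means records were lost (positive) or duplicated (negative) somewhere between source and destination.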

Step 5: Automate ETL Process

The last step is to automate the ETL process using special tools. This will help you save time, improve accuracy, and reduce the effort involved in restarting the ETL process manually. With ETL automation tools, you can design and control a workflow through a simple interface. In addition, these tools have capabilities such as profiling and data cleaning.

Choosing the Right ETL Tool

To begin with, let’s figure out what ETL tools exist. There are currently four types available. Some are designed to work in a local environment, some work in the cloud, and some work in both environments.

Which to choose depends on where your data is located and what needs your business has:

- ETL tools for batch processing of data in local storage.

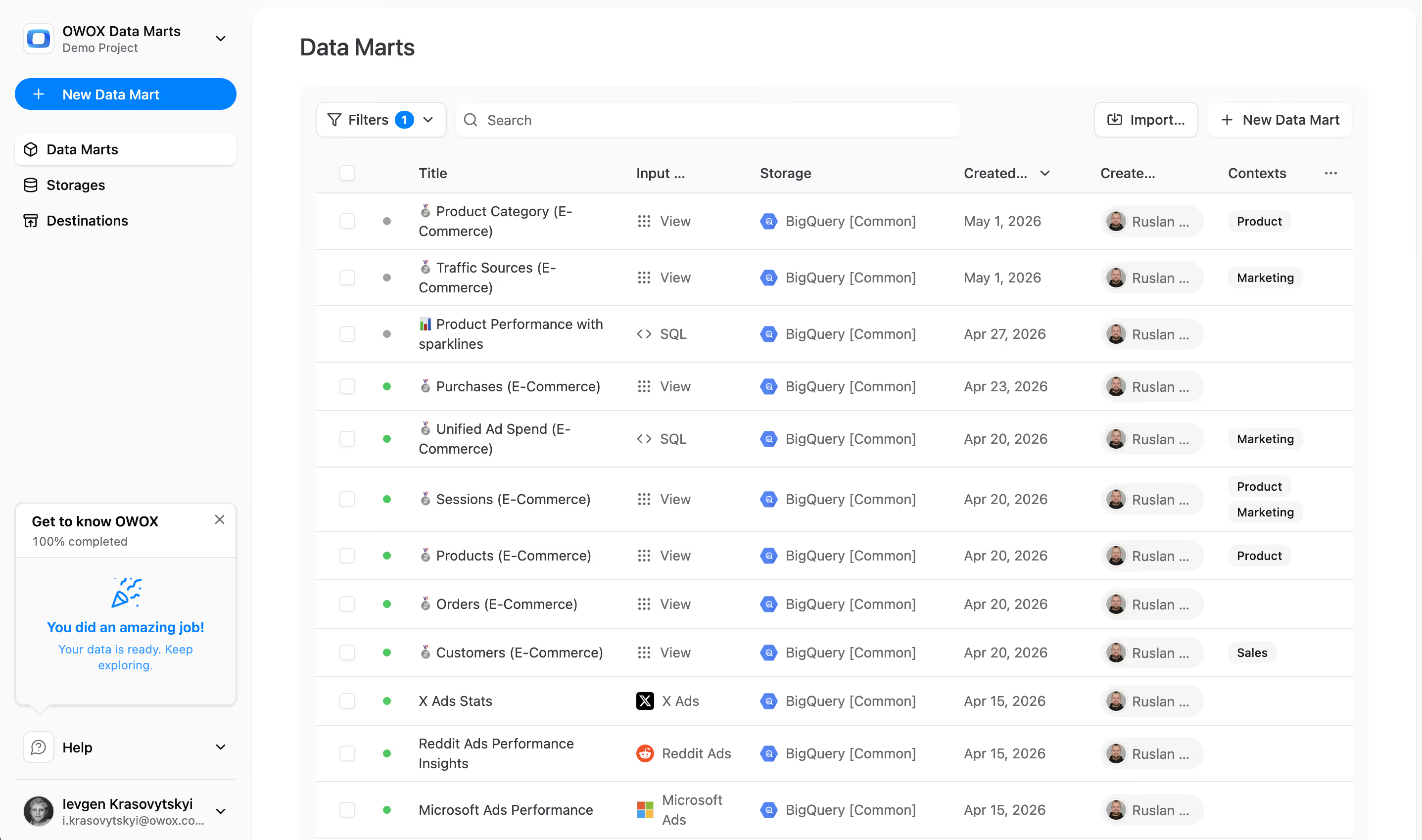

- Cloud ETL tools that can extract and load data from sources directly to cloud storage. They can then transform the data using the power and scale of the cloud. Example: OWOX BI.

- Open-source ETL tools such as Apache Airflow, Apache Kafka, and Apache NiFi are a budget alternative to paid services. Some don’t support complex transformations and may lack dedicated customer support.

- Real-time ETL tools. Data is processed in real time using a distributed model and data streaming capabilities.

Key Factors in Choosing an ETL Tool

- Ease of use and maintenance

- Speed of work

- Level of security

- The number and variety of connectors needed

- Ability to work seamlessly with other components of your data platform, including data storage and data lakes

ETL/ELT and OWOX BI

With OWOX BI, you can collect marketing data for reports of any complexity in secure Google BigQuery cloud storage without the help of analysts and developers.

What you get with OWOX BI:

- Automatically collect data from various sources

- Automatically import raw data into Google BigQuery

- Clean, deduplicate, monitor the quality of, and update data

- Prepare and model business-ready data

- Build reports without the help of analysts or SQL knowledge

OWOX BI frees up your precious time, so you can pay more attention to optimizing advertising campaigns and growth zones.

You no longer have to wait for reports from an analyst. Get ready-made dashboards or a custom report based on modeled data and suited to your business.

With OWOX BI’s unique approach, you can modify data sources and data structures without rewriting SQL queries or rebuilding reports. This is especially relevant with the release of the new Google Analytics 4.

Key Takeaways

The volume of data that companies collect grows every day and will continue to grow. Working with local databases and batch loading may be enough for now, but very soon it won’t satisfy business needs. The ability to scale ETL processes is therefore valuable, particularly for advanced analytics.

The main advantages of ETL tools are:

- saving your time

- avoiding manual data processing

- making it easy to work with complex data

- reducing risks associated with the human factor

- helping improve decision-making

- increasing ROI

When it comes to choosing an ETL tool, think about your business's particular needs. If you work locally and your data is predictable and comes from only a few sources, then traditional ETL will suffice. But don’t forget that more and more companies are moving to cloud or hybrid architectures, and you have to take this into account.

Frequently asked questions

What are some challenges associated with ETL?

Some challenges associated with ETL include staying up-to-date with changing data sources and ensuring data security during the extraction, transformation, and loading process. Additionally, ETL can be complex and may require specialized knowledge or expertise to set up and maintain.

What are the benefits of ETL for businesses?

ETL can help businesses improve data accuracy, save time and effort by automating the data integration process, reduce data duplication, and improve overall data quality.

What are some common ETL tools?

Some common ETL tools include Apache NiFi, Apache Spark, Talend Open Studio, Pentaho Data Integration, and Microsoft SQL Server Integration Services.
